Last updated: March 2026 · 12 min read · Written by the team at Readable.ai, a platform that tracks brand mentions across ChatGPT, Gemini, Perplexity, and Google AI Overviews. We analyse millions of AI-generated responses every month to help brands understand how they appear in the new era of search.

If someone asks ChatGPT "what's the best project management tool for a remote team", does your brand get mentioned? And if it does, is the sentiment positive? Are your competitors mentioned more often?

These are the questions that define share of voice in AI search. And in 2026, they matter more than almost any other brand metric you're tracking.

Traditional share of voice (the percentage of ad spend, social mentions, or organic traffic you hold relative to your competitors) was already hard to measure. AI search has made it significantly more complex, and more important.

This guide walks you through exactly what AI share of voice (AI SOV) is, why it's different from everything you've measured before, and a practical step-by-step process to start tracking it, whether you're using a dedicated tool or starting manually.

Quick answer: AI share of voice measures how often your brand appears in AI-generated responses compared to competitors, across which AI platforms, in what context, and with what sentiment. Tracking it requires a different methodology from traditional SOV. This guide covers all of it.

What's in this guide

- What is AI share of voice and why it's different

- Why AI SOV matters more than traditional SOV in 2026

- The 5 metrics you need to measure AI SOV properly

- How to measure AI SOV: step-by-step

- Tools for tracking AI share of voice

- How to improve your AI SOV once you've measured it

- Common mistakes brands make when tracking AI visibility

- FAQs

What is AI Share of Voice and Why It's Different

Share of voice has traditionally meant one of two things:

- Paid SOV: the percentage of total ad impressions in your category that your brand captures.

- Organic/earned SOV: the percentage of organic search rankings, social mentions, or media coverage your brand holds relative to competitors.

AI share of voice is a third category: the proportion of AI-generated responses in your product category that include your brand, compared to how often competitors appear.

When a user types a query into ChatGPT, Perplexity, Google Gemini, or Microsoft Copilot, the AI generates a response that may recommend specific brands, compare products, or explain what the best solution is. Your brand either appears in that response or it doesn't.

Why AI SOV is fundamentally different

| Dimension | Traditional Search SOV | AI Search SOV |
|---|---|---|
| What you're measuring | Rankings, impressions, clicks | Mentions in generated responses |
| User behaviour | User scans a SERP | User reads AI-generated answer |
| Where your brand appears | A link on a results page | Woven into a recommendation |
| Competitive landscape | 10 blue links per query | 1-3 brands named in a response |
| How you track it | GSC, Ahrefs, SEMrush | AI query monitoring platforms |
| Sentiment signal | Ranking position only | Positive, negative, or neutral framing |

The key difference: in traditional search, visibility is binary. You either rank or you don't. In AI search, visibility exists on a spectrum. Your brand might be mentioned but framed negatively. It might appear only when prompted with a specific use case. A competitor might be mentioned twice in the same response. These nuances are invisible to traditional SEO tools.

To understand how AI models decide what to surface, it helps to read about how retrieval-augmented generation works and how training data shapes model outputs. For a practical brand-focused take, our AI Search Field Guide is a good starting point.

Why AI SOV Matters More Than Traditional SOV in 2026

Consumer behaviour has shifted substantially. A growing percentage of users now start product research conversations directly in AI chatbots rather than typing queries into Google. For B2B software, this shift is even more pronounced. Buyers frequently ask AI tools to compare options before visiting a vendor website.

Research from SparkToro shows that zero-click searches on Google have risen significantly, with users increasingly getting answers without ever visiting a website. AI chat interfaces accelerate this further, meaning brand visibility within the generated answer is now the primary battleground, not the link on the results page.

The implications are significant:

- If your brand is not mentioned in AI responses for your core use cases, you are invisible to a large and growing segment of buyers during the most critical decision-making stage.

- Unlike a Google ranking (which you can track and target precisely), AI mentions are probabilistic. The same query can yield different results across sessions, models, and user contexts.

- AI responses are trusted at a higher rate than organic links. When ChatGPT recommends a brand, users perceive it as an objective recommendation, not a paid placement.

Data point: Users click through to a brand mentioned in an AI response at 3-4x the rate of equivalent organic search rankings. Being named in an AI answer is not just a visibility metric. It directly drives traffic and revenue.

This is why AI SOV is fast becoming a primary KPI for brand and SEO teams. For a deeper look at how AI agents are reshaping the purchase funnel, see our guide on how AI agents affect the sales funnel.

The 5 Metrics You Need to Measure AI SOV Properly

AI SOV is not a single number. It's a composite of several signals. Here are the five metrics that together give you a complete picture:

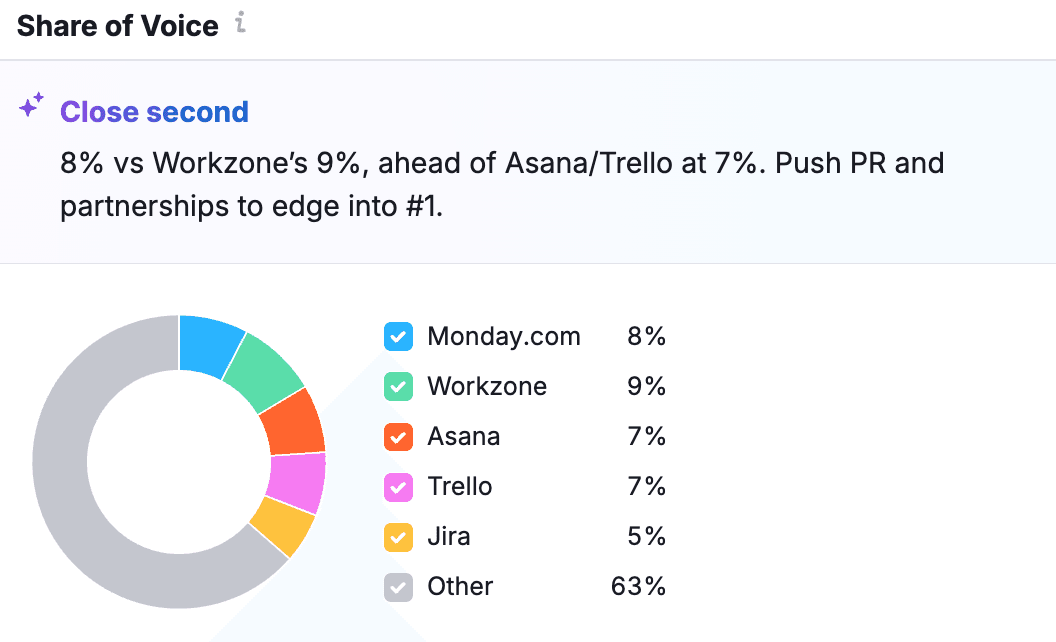

1. Mention rate

The percentage of queries in a defined topic set where your brand is mentioned at least once in the AI response. This is your baseline visibility score.

Formula: (Queries where your brand appears / Total queries tracked) x 100

Benchmark: Brands with strong category authority typically achieve mention rates of 30-60% for core use-case queries. If you're below 15%, you have a significant visibility gap.
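To make the formula concrete, here is a minimal Python sketch. The function name `mention_rate`, the brand names, and the sample responses are all hypothetical, and the whole-word, case-insensitive match is a simplifying assumption: a real pipeline would also need to handle aliases and misspellings.

```python
import re


def mention_rate(responses: list[str], brand: str) -> float:
    """Percentage of responses that mention the brand at least once."""
    if not responses:
        return 0.0
    # Whole-word, case-insensitive match (an assumption, not a standard).
    pattern = re.compile(rf"\b{re.escape(brand)}\b", flags=re.IGNORECASE)
    hits = sum(1 for text in responses if pattern.search(text))
    return 100 * hits / len(responses)


# Hypothetical AI responses, one per tracked query.
responses = [
    "For remote teams, Acme and Globex are popular choices.",
    "Globex is a solid option for small teams.",
    "Many teams start with a shared spreadsheet.",
    "Acme is often recommended for its integrations.",
]

print(mention_rate(responses, "Acme"))  # 50.0
```

Two of the four sample responses name the brand, so the mention rate is 50%.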

2. Share of mention vs. competitors

Within responses that mention your brand, how often are you the only brand mentioned versus one of several? And how does your mention frequency compare to competitors across the full query set?

Formula: (Your brand mentions / Total brand mentions in category) x 100

This is the true competitive SOV metric, equivalent to share of shelf in a retail context. Nielsen's research on share of voice and market share shows a strong historical correlation between the two, a relationship that is beginning to play out in AI search as well.

3. Sentiment score

Being mentioned is not enough. The framing matters enormously. An AI response that says "Brand X is often criticised for poor customer support" can do more damage than not appearing at all.

Measure sentiment on a three-point scale (positive, neutral, negative) across each mention. Track this over time, as AI models are regularly retrained and sentiment can shift.
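As a rough illustration, the sketch below turns a list of per-mention labels into a percentage breakdown. It assumes the labels have already been assigned (by a reviewer or a classifier). The "net sentiment" figure, positive share minus negative share, is our own convenience summary for trend tracking, not an industry standard.

```python
from collections import Counter

LABELS = ("positive", "neutral", "negative")


def sentiment_breakdown(labels: list[str]) -> dict[str, float]:
    """Percentage of mentions carrying each sentiment label."""
    unknown = set(labels) - set(LABELS)
    if unknown:
        raise ValueError(f"Unexpected labels: {sorted(unknown)}")
    counts = Counter(labels)
    total = len(labels)
    return {label: (100 * counts[label] / total if total else 0.0) for label in LABELS}


def net_sentiment(labels: list[str]) -> float:
    """Positive share minus negative share, in percentage points (assumed summary metric)."""
    breakdown = sentiment_breakdown(labels)
    return breakdown["positive"] - breakdown["negative"]


# Hypothetical labels for four mentions of one brand.
labels = ["positive", "positive", "neutral", "negative"]
print(sentiment_breakdown(labels))
print(net_sentiment(labels))  # 25.0
```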

4. Query coverage breadth

How many of your relevant use-case queries does your brand appear in? A brand might have a very high mention rate for its primary category but be invisible for adjacent queries that capture buyers at the top of the funnel.

Map your query coverage against your ideal customer journey. Awareness, consideration, and decision-stage queries should each be tracked separately. Google's research on micro-moments provides a useful framework for thinking about intent mapping across the journey.
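One way to track the stages separately is to tag each query with its funnel stage and compute a mention rate per stage. The sketch below assumes each result is a `(stage, brand_mentioned)` pair. The stage names follow the awareness, consideration, decision split above, and the data is hypothetical.

```python
from collections import defaultdict


def coverage_by_stage(results: list[tuple[str, bool]]) -> dict[str, float]:
    """Mention rate (%) per funnel stage from (stage, brand_mentioned) pairs."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for stage, mentioned in results:
        totals[stage] += 1
        hits[stage] += int(mentioned)
    return {stage: 100 * hits[stage] / totals[stage] for stage in totals}


# Hypothetical results: two queries per stage.
results = [
    ("awareness", False),
    ("awareness", False),
    ("consideration", True),
    ("consideration", False),
    ("decision", True),
    ("decision", True),
]

coverage = coverage_by_stage(results)
print(coverage)
```

In this example the brand is fully covered at the decision stage (100%) but invisible at the awareness stage (0%), which is exactly the pattern a single blended mention rate would hide.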

5. Platform distribution

ChatGPT, Google AI Overviews, Gemini, Perplexity, and Microsoft Copilot all use different underlying models and data sources. Your brand's AI SOV can vary dramatically across platforms. A brand that ranks well in ChatGPT responses may barely appear in Google's AI Overviews, and vice versa.

Track each platform separately, and weight them by the traffic share they represent for your target audience. Statista's data on ChatGPT user numbers gives a useful sense of relative platform scale when deciding where to focus first.
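Weighting can be as simple as a weighted average of per-platform mention rates. In the sketch below, the rates and the audience weights are made-up numbers: you would substitute your own estimates of how much of your audience uses each platform.

```python
def weighted_mention_rate(rates: dict[str, float], weights: dict[str, float]) -> float:
    """Average of per-platform mention rates, weighted by audience share."""
    total_weight = sum(weights[platform] for platform in rates)
    if total_weight == 0:
        raise ValueError("Weights must sum to a positive number")
    weighted_sum = sum(rates[platform] * weights[platform] for platform in rates)
    return weighted_sum / total_weight


# Hypothetical mention rates (%) and audience weights.
rates = {"ChatGPT": 40.0, "Perplexity": 20.0, "AI Overviews": 10.0}
weights = {"ChatGPT": 0.5, "Perplexity": 0.25, "AI Overviews": 0.25}

print(weighted_mention_rate(rates, weights))  # 27.5
```

Because the function divides by the total weight, the weights do not need to sum to 1.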

How to Measure AI Share of Voice: Step-by-Step

Step 1: Build your query set

Start by defining the queries that matter for your brand. These fall into three groups:

- Category queries: "best [product category]", "top [product category] tools", "[product category] for [use case]"

- Comparison queries: "[Your brand] vs [Competitor]", "alternatives to [Competitor]", "which is better [A] or [B]"

- Problem-first queries: "how do I [solve problem your product solves]", "what's the best way to [job to be done]"

Aim for a minimum of 50 queries in your initial set, covering all three groups. That is enough to show broad patterns, although with a set this size a difference of a few percentage points between brands can still be noise.

Tip: Look at your existing keyword research. The queries you rank for organically, especially informational and comparison intent keywords, are a strong starting point for your AI SOV query set. Tools like Ahrefs Keyword Explorer or Google Search Console are the right places to pull this data.
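If you want to generate the three query groups from templates rather than writing each one by hand, a small script is enough. The sketch below fills in a subset of the templates listed in this step. The function name, brand names, and inputs are hypothetical, and you should still review the output by hand, since templated queries can read unnaturally.

```python
def build_query_set(
    category: str,
    use_cases: list[str],
    brand: str,
    competitors: list[str],
    problems: list[str],
) -> list[tuple[str, str]]:
    """Return (group, query) pairs for category, comparison, and problem-first queries."""
    queries = [
        ("category", f"best {category}"),
        ("category", f"top {category} tools"),
    ]
    queries += [("category", f"{category} for {use_case}") for use_case in use_cases]
    for competitor in competitors:
        queries.append(("comparison", f"{brand} vs {competitor}"))
        queries.append(("comparison", f"alternatives to {competitor}"))
    queries += [("problem", f"how do I {problem}") for problem in problems]
    return queries


# Hypothetical inputs.
queries = build_query_set(
    category="project management software",
    use_cases=["remote teams", "agencies"],
    brand="Acme",
    competitors=["Globex", "Initech"],
    problems=["keep a distributed team on schedule"],
)

for group, query in queries:
    print(f"{group}: {query}")
```

Keeping the group label next to each query makes it easy to report metrics per group later.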

Step 2: Run systematic queries across AI platforms

For each query in your set, run it in each AI platform you're tracking and record the full response. You need to do this at scale and consistently. Manual spot-checking will not give you reliable trend data.

If you're starting manually (before investing in a tool), run each query at the same time of day, in a fresh browser session to avoid personalisation, and record responses in a structured spreadsheet. Note:

- Whether your brand was mentioned (yes/no)

- Position in the response (first mention, second, etc.)

- Sentiment of the mention (positive/neutral/negative)

- Which competitors were mentioned in the same response

- Whether a source or link was cited alongside the mention
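The fields above map naturally onto one row per query, per platform, per run. Here is one possible layout as a Python dataclass written out to CSV. The field names are our own suggestion rather than a standard schema, and the sample row is invented.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from typing import Optional


@dataclass
class ResponseRecord:
    query: str
    platform: str
    run_date: str                     # ISO date, e.g. "2026-03-02"
    brand_mentioned: bool
    mention_position: Optional[int]   # 1 = first brand named; None if not mentioned
    sentiment: Optional[str]          # "positive", "neutral", "negative", or None
    competitors_mentioned: str        # semicolon-separated brand names
    source_cited: bool


def write_records(records: list[ResponseRecord], handle) -> None:
    """Write records as CSV with a header row."""
    writer = csv.DictWriter(handle, fieldnames=[f.name for f in fields(ResponseRecord)])
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))


# Hypothetical record, written to an in-memory buffer instead of a file.
buffer = io.StringIO()
write_records(
    [
        ResponseRecord(
            query="best project management software for remote teams",
            platform="ChatGPT",
            run_date="2026-03-02",
            brand_mentioned=True,
            mention_position=1,
            sentiment="positive",
            competitors_mentioned="Globex;Initech",
            source_cited=False,
        )
    ],
    buffer,
)
print(buffer.getvalue())
```

To write to a real spreadsheet file, pass `open("responses.csv", "w", newline="")` in place of the buffer.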

Step 3: Calculate your metrics

Once you have data for your full query set, calculate each of the five metrics described above. Build a simple dashboard (even in a spreadsheet) that shows:

- Your overall mention rate vs. each competitor

- Your share of mentions in the category

- Sentiment breakdown by platform

- Query coverage map: which use cases you appear in, which you're missing

- Platform-by-platform comparison
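A platform-by-platform comparison can be produced directly from the recorded responses. The sketch below assumes each record is a dictionary with a `platform` and a set of `brands_mentioned`. Both key names, and the data, are hypothetical.

```python
from collections import defaultdict


def mention_rate_table(records: list[dict], brands: list[str]) -> dict[str, dict[str, float]]:
    """Mention rate (%) for each brand on each platform."""
    totals = defaultdict(int)
    hits = defaultdict(lambda: defaultdict(int))
    for record in records:
        platform = record["platform"]
        totals[platform] += 1
        for brand in brands:
            if brand in record["brands_mentioned"]:
                hits[platform][brand] += 1
    return {
        platform: {brand: 100 * hits[platform][brand] / totals[platform] for brand in brands}
        for platform in totals
    }


# Hypothetical records: two responses per platform.
records = [
    {"platform": "ChatGPT", "brands_mentioned": {"Acme", "Globex"}},
    {"platform": "ChatGPT", "brands_mentioned": {"Globex"}},
    {"platform": "Perplexity", "brands_mentioned": {"Acme"}},
    {"platform": "Perplexity", "brands_mentioned": set()},
]

table = mention_rate_table(records, ["Acme", "Globex"])
for platform, row in table.items():
    print(platform, row)
```

In this sample, Globex appears in every ChatGPT response but never on Perplexity, while Acme sits at 50% on both.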

Step 4: Set a baseline and establish a tracking cadence

Your first measurement is your baseline. AI models are updated regularly, and your AI SOV will fluctuate. Set a cadence for re-measurement:

- Weekly: For fast-moving categories or brands actively running AI SEO campaigns

- Monthly: Minimum recommended cadence for most brands

- After major events: Run a measurement after any significant product launch, PR event, or known AI model update
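Because AI responses vary from run to run, it helps to check whether a change from your baseline is larger than the noise you would expect from the size of your query set. One option, which is our suggestion rather than a required part of the method, is to put a Wilson score interval around each mention rate and treat a change as clear only when the two intervals do not overlap. This is a deliberately conservative heuristic, and it assumes each query behaves like an independent trial, which is only approximately true.

```python
import math


def wilson_interval(hits: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z = 1.96 gives roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = hits / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width


def is_clear_change(base_hits: int, base_n: int, cur_hits: int, cur_n: int) -> bool:
    """True when the baseline and current intervals do not overlap."""
    base_low, base_high = wilson_interval(base_hits, base_n)
    cur_low, cur_high = wilson_interval(cur_hits, cur_n)
    return cur_low > base_high or cur_high < base_low


# Hypothetical counts: brand mentioned in 10 of 50 queries at baseline.
print(is_clear_change(10, 50, 25, 50))  # 20% -> 50%
print(is_clear_change(10, 50, 12, 50))  # 20% -> 24%
```

With 50 queries, a jump from 20% to 50% clears the bar, while a move from 20% to 24% does not.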

Step 5: Identify gaps and prioritise action

Your data will reveal three types of gaps:

- Visibility gaps: Queries where you have low or zero mention rate

- Sentiment gaps: Queries where you appear but with neutral or negative framing

- Platform gaps: Platforms where you're underrepresented relative to competitors

Prioritise gaps by two factors: the query volume (how many users are actually asking this) and the commercial intent (how close to a purchase decision is this query). Fix high-volume, high-intent gaps first.
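A simple way to apply the two factors is to multiply them into a single score and sort. In the sketch below, `monthly_volume` is your own estimate of how often a query is asked and `intent` is a 0 to 1 weight you assign by hand. Both fields, and the multiplication itself, are assumptions rather than a fixed formula.

```python
def prioritise_gaps(gaps: list[dict]) -> list[dict]:
    """Sort gaps by estimated volume x commercial intent, highest first."""
    return sorted(gaps, key=lambda gap: gap["monthly_volume"] * gap["intent"], reverse=True)


# Hypothetical gaps with estimated volume and a hand-assigned intent weight.
gaps = [
    {"query": "how do I keep a distributed team on schedule", "monthly_volume": 5000, "intent": 0.2},
    {"query": "Acme vs Globex", "monthly_volume": 800, "intent": 0.9},
    {"query": "best project management software", "monthly_volume": 3000, "intent": 0.7},
]

ranked = prioritise_gaps(gaps)
for gap in ranked:
    print(gap["query"])
```

The category query ranks first here (score 2,100), ahead of the high-volume but low-intent problem query (1,000) and the low-volume comparison query (720).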

Tools for Tracking AI Share of Voice

There are now several dedicated tools for tracking AI brand visibility. They differ significantly in their approach, coverage, and pricing:

| Tool | AI Platforms Covered | Strengths | Best for |
|---|---|---|---|
| Readable.ai | ChatGPT, Gemini, Perplexity, AI Overviews | Automated query monitoring, sentiment scoring, competitor benchmarking | Brands wanting continuous, automated AI SOV tracking |
| Brandwatch | Limited AI coverage | Strong traditional media SOV | Brands combining AI and traditional SOV tracking |
| Meltwater | Limited AI coverage | Broad media monitoring | Enterprise teams with existing Meltwater contracts |
| Manual spreadsheet method | Any | Free, full control | Early-stage teams validating the methodology |

For most brands, the right approach is a dedicated AI visibility tool combined with periodic manual validation. Automated tools can miss nuance in responses. Human review of a sample of responses each month adds valuable qualitative context.

For more on how AI agents crawl and interpret websites (which affects what they surface in responses), see our post on what your analytics misses about AI agent traffic.

How to Improve Your AI Share of Voice

Measuring your AI SOV is only the first step. Here's what actually moves the needle:

1. Create content that directly answers the queries you're missing

AI models are trained on web content, and many also retrieve live pages when generating an answer. If authoritative content exists that answers a query and mentions your brand positively, the model is more likely to draw on it. For every visibility gap you identify, create or update content that directly and comprehensively addresses that query.

Google's helpful content guidance remains the most reliable public framework for what "authoritative, comprehensive content" means in practice, and it maps closely to what AI models appear to weight.

2. Get mentioned on authoritative third-party sites

AI models weight citations from trusted sources heavily. A mention on a major industry publication, in a well-regarded comparison article, or in an analyst report significantly increases the probability of your brand appearing in AI responses for related queries. This is the AI equivalent of link building.

Moz's overview of domain authority explains the underlying logic well. High-authority sites that mention your brand pass more signal to AI models, just as they pass more PageRank in traditional search.

3. Ensure your product and company information is structured and accurate

AI models pull from structured data sources, including your own website, Wikipedia, review platforms (G2, Capterra, Trustpilot), and social profiles. Keep all of these consistently updated and accurate. Inconsistent information across sources creates confusion for AI models and can lead to neutral or inaccurate mentions.

Schema.org Product markup is one of the most direct ways to give AI models structured, unambiguous information about your product.
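As an illustration, the sketch below builds a minimal Schema.org `Product` object as JSON-LD. The properties used (`name`, `description`, `brand`, `url`) are standard Schema.org properties, but the helper function and all values are placeholders. Check the Schema.org and search engine documentation for the properties that fit your product.

```python
import json


def product_jsonld(name: str, description: str, brand: str, url: str) -> str:
    """Return a minimal Schema.org Product description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "brand": {"@type": "Brand", "name": brand},
        "url": url,
    }
    return json.dumps(data, indent=2)


# Placeholder values for a hypothetical product.
markup = product_jsonld(
    name="Acme Boards",
    description="Project management software for remote teams.",
    brand="Acme",
    url="https://example.com/acme-boards",
)
print(markup)
```

The resulting JSON goes inside a `<script type="application/ld+json">` tag on the product page.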

4. Generate positive, specific reviews on third-party platforms

Review platforms are heavily indexed by AI models. Detailed reviews that mention specific use cases, feature names, and outcomes give AI models the language to describe your product accurately and positively. Encourage customers to leave specific, use-case-focused reviews on platforms like G2 and Capterra rather than generic star ratings.

5. Track, respond to, and build on your sentiment trends

If your AI SOV data shows consistently neutral or negative sentiment around a specific feature or claim, that's a signal to address, either by improving the product, creating better content around that area, or generating more positive third-party coverage that counters the framing.

Common Mistakes Brands Make When Tracking AI Visibility

- Tracking only one AI platform. ChatGPT dominates consumer queries, but Google AI Overviews reaches billions of existing Google users. Perplexity skews heavily towards researchers and technical buyers. Each platform requires separate tracking.

- Confusing impressions with mentions. A page that appears in an AI response citation is different from a brand that is actively recommended in the response text. These are different signals. Make sure your methodology distinguishes between them.

- Measuring in isolation. AI SOV metrics only become useful when compared against competitors and tracked over time. A one-time measurement tells you very little.

- Ignoring adjacent queries. Brands often focus on their primary category queries and miss the top-of-funnel and adjacent queries where buying decisions begin. Map your full customer journey and build queries for each stage.

- Not accounting for personalisation. AI responses can vary based on the user's location, browsing history, and previous queries. Systematic measurement requires controlling for these variables by running queries in clean sessions and across multiple geographies.

Frequently Asked Questions

How is AI share of voice different from Google Search Console impressions?

Google Search Console measures how often your pages appear in traditional search results. AI SOV measures how often your brand is mentioned within AI-generated answers, a fundamentally different type of visibility. A brand can have high GSC impressions and very low AI SOV, and vice versa.

Can small brands compete with large brands in AI SOV?

Yes. AI models do not simply favour the largest brands. They favour the brands with the best-documented, most authoritative, and most frequently cited information about specific use cases. A specialist brand with deep content authority in a niche can significantly outperform a large generalist brand in AI responses for that niche.

How often do AI models update, and how does that affect my SOV?

Major AI models are updated on varying schedules, some monthly, some quarterly. Each update can shift the training data and therefore shift your AI SOV. This is why continuous tracking matters: an AI model update can be equivalent to a major Google algorithm update for your brand's visibility.

Is it possible to directly influence what AI says about my brand?

Not directly. You cannot submit content to an AI model the way you submit a sitemap to Google. However, you can influence AI outputs indirectly by ensuring the web sources that AI models draw from contain accurate, positive, and comprehensive information about your brand. This is the core practice of Answer Engine Optimisation (AEO). Our AEO for financial services guide shows how this works in a regulated industry context.

What's a good benchmark for AI SOV in my category?

Benchmarks vary significantly by category. In competitive software categories, the top brand might hold 40-60% AI SOV. In emerging or less-discussed categories, even a 20% mention rate represents strong authority. The most useful benchmark is always your own trend over time compared to specific competitors, rather than an industry average.

The Bottom Line

AI search has created a new battleground for brand visibility. Share of voice in AI-generated responses is not a future concern. It is already influencing purchase decisions, driving organic traffic, and reshaping which brands buyers know and trust.

Brands that start measuring their AI SOV now will build a structural advantage over the next 12-24 months. Brands that wait until AI search is fully dominant will be trying to recover lost ground on a battlefield that has already been decided.

The methodology is not complicated. Define your queries. Run them systematically. Measure your mention rate, share, sentiment, coverage breadth, and platform distribution. Build a baseline. Track changes. Act on the gaps.

Want to see your brand's current AI share of voice? Run a free audit at tryreadable.ai and see which AI platforms mention your brand, what they say, and how you compare to competitors in under 5 minutes.
