How to Track Brand Mentions in AI Search Results

The rules of brand visibility changed the moment AI-generated answers became the default interface for millions of search queries. Today, when someone asks ChatGPT "what's the best project management tool for remote teams?" or queries Perplexity for "top email marketing platforms," the answer they receive is not a list of ten blue links. It is a synthesized paragraph—sometimes two—that names a handful of brands and ignores everyone else.

If your brand is not in that paragraph, you effectively do not exist for that query.

This is not a hypothetical future problem. According to data from SparkToro and Datos, zero-click searches now account for more than 58% of all Google searches in the US. Add the rapid adoption of AI Overviews, ChatGPT Search, Perplexity, and Microsoft Copilot, and the share of queries that never reach a traditional results page is growing fast.

The problem for most marketing teams is that they have no systematic way to know whether they are being mentioned in these AI-generated answers. Traditional rank trackers monitor positions 1 through 10. They were not built for a world where position zero is a generated paragraph that may or may not include your name.

This guide gives you a practical, repeatable framework for tracking brand mentions in AI search results—without needing an enterprise budget or a data science team.

What You'll Learn

- Why AI search mentions are fundamentally different from traditional keyword rankings

- Which AI search surfaces you need to monitor right now

- A step-by-step process for auditing your current AI visibility

- The tools and manual methods you can use today

- Common mistakes that cause brands to disappear from AI answers

- Three concrete tasks you can complete this week

Table of Contents

- Why AI Search Mentions Are Different

- The AI Search Surfaces You Need to Monitor

- Step-by-Step Framework for Tracking Brand Mentions

- Tools for AI Brand Mention Tracking

- How to Interpret What You Find

- Common Mistakes That Kill AI Visibility

- What to Do This Week

- FAQ

- Sources

Why AI Search Mentions Are Different

Traditional SEO gave marketers a relatively legible signal: your page ranked at position 4 for a given keyword. You could track that number over time, compare it to competitors, and tie it to traffic. The feedback loop, while imperfect, was measurable.

AI search breaks that model in three important ways.

1. There is no position—there is inclusion or exclusion.

When Perplexity answers "what CRM should a 10-person startup use?", it does not rank HubSpot at position 1 and Pipedrive at position 2. It either mentions them in the generated answer or it does not. The binary nature of this inclusion makes traditional rank-tracking metrics meaningless.

2. The same query can produce different answers.

Large language models are probabilistic. Ask ChatGPT the same question twice and you may get two different sets of brand mentions. This means a single snapshot is not enough: you need repeated sampling across time and query variations to understand your true visibility rate.

3. The sources that influence AI answers are not always the sources that rank in traditional search.

Research from Ahrefs on Google AI Overviews found that the pages cited in AI-generated answers frequently differ from the pages that rank in the top 10 organic results for the same query. This means your SEO performance and your AI visibility can diverge significantly—and often do.

Understanding these differences is the prerequisite for building a tracking system that actually works.

The AI Search Surfaces You Need to Monitor

Before you can track mentions, you need to know where to look. As of early 2025, the primary AI search surfaces that matter for brand visibility are:

Google AI Overviews (formerly SGE)

Google's AI Overviews appear at the top of search results for a growing percentage of queries. Google has confirmed that AI Overviews now reach over a billion users. For most B2B and B2C brands, this is the highest-volume surface to monitor.

ChatGPT Search

OpenAI's search integration, powered by Bing, allows ChatGPT to retrieve and synthesize real-time web content. With ChatGPT's user base exceeding 300 million weekly active users, brand mentions in ChatGPT answers carry significant reach.

Perplexity AI

Perplexity has positioned itself as a research-first AI search engine and has attracted a highly engaged, often professional audience. Its citation model makes it particularly important for B2B brands—Perplexity explicitly shows which sources it drew from, making it easier to reverse-engineer why certain brands appear.

Microsoft Copilot (Bing AI)

Copilot is integrated into Windows, Edge, and Microsoft 365, giving it a massive passive distribution channel. For brands targeting enterprise buyers, Copilot visibility is increasingly important.

Claude and Gemini

While Anthropic's Claude and Google's Gemini are primarily used as standalone assistants rather than search engines, their adoption in enterprise workflows means they are increasingly consulted for vendor recommendations and category research.

Step-by-Step Framework for Tracking Brand Mentions

Here is the framework we recommend at TryReadable for systematically monitoring AI brand mentions. You can run a quick analysis of your current AI visibility here before working through these steps.

Step 1: Build Your Query Universe

The first step is identifying the queries where you want to appear. These fall into three categories:

Category queries — broad questions about your product category. Example: "best email marketing software for ecommerce"

Problem queries — questions about the problem your product solves. Example: "how do I improve email open rates"

Comparison queries — questions that compare vendors in your space. Example: "Mailchimp vs Klaviyo vs ActiveCampaign"

For most brands, a starting query universe of 30–50 queries is sufficient. Prioritize queries with clear commercial intent—these are the ones where AI answers are most likely to influence purchase decisions.

Practical tip: Use your existing keyword research as a starting point, but reframe keywords as natural-language questions. AI search engines are optimized for conversational queries, not keyword strings.
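A query universe is easier to maintain when every query carries its category tag. The sketch below is one possible way to organize it; the `Query` class and `build_universe` helper are hypothetical names chosen for illustration, and the sample queries are the ones used in this step.

```python
from collections import Counter
from dataclasses import dataclass

CATEGORIES = ("category", "problem", "comparison")


@dataclass(frozen=True)
class Query:
    text: str
    kind: str  # one of CATEGORIES

    def __post_init__(self):
        if self.kind not in CATEGORIES:
            raise ValueError(f"unknown query kind: {self.kind!r}")


def build_universe(raw):
    """Turn (text, kind) pairs into de-duplicated Query objects, preserving order."""
    seen = set()
    universe = []
    for text, kind in raw:
        key = text.strip().lower()
        if key in seen:
            continue  # skip case-insensitive duplicates
        seen.add(key)
        universe.append(Query(text.strip(), kind))
    return universe


universe = build_universe([
    ("best email marketing software for ecommerce", "category"),
    ("how do I improve email open rates", "problem"),
    ("Mailchimp vs Klaviyo vs ActiveCampaign", "comparison"),
    ("Best email marketing software for ecommerce", "category"),  # duplicate
])
counts = Counter(q.kind for q in universe)
print(len(universe), dict(counts))
```

Counting queries per category makes it easy to spot a universe that leans too heavily on one query type.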

Step 2: Run Baseline Queries Across Surfaces

With your query list in hand, run each query manually across the surfaces that matter most for your audience. For each query, record:

- Whether your brand is mentioned (yes/no)

- The context of the mention (positive, neutral, negative, or comparative)

- Which competitors are mentioned

- Whether your brand is cited as a source

- The date and time of the query

This is tedious but essential. The goal of this baseline is to establish your current mention rate—the percentage of relevant queries where your brand appears in the AI-generated answer.

Use a simple spreadsheet to start. Here is a template structure:

| Query | Surface | Brand Mentioned | Competitor Mentioned | Context | Date |
|---|---|---|---|---|---|
| best CRM for startups | ChatGPT | No | HubSpot, Pipedrive | N/A | 2025-01-15 |
| best CRM for startups | Perplexity | Yes | HubSpot, Salesforce | Positive | 2025-01-15 |
| CRM software comparison | Google AI Overview | No | Salesforce, Zoho | N/A | 2025-01-15 |
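If you would rather keep the log as a CSV file than a hand-edited spreadsheet, the template can be expressed as a small record type. This is a minimal sketch, not a prescribed format: the `Observation` class and `write_log` function are hypothetical, and the `cited_as_source` values are placeholders because the sample table does not include that column.

```python
import csv
import io
from dataclasses import dataclass, asdict, fields


@dataclass
class Observation:
    query: str
    surface: str
    brand_mentioned: bool
    competitors: str       # comma-separated competitor names
    context: str           # positive, neutral, negative, comparative, or empty
    cited_as_source: bool  # placeholder values below; not in the sample table
    observed_at: str       # ISO 8601 date or datetime


def write_log(observations, handle):
    """Write observations as CSV rows with a header line."""
    writer = csv.DictWriter(handle, fieldnames=[f.name for f in fields(Observation)])
    writer.writeheader()
    for obs in observations:
        writer.writerow(asdict(obs))


rows = [
    Observation("best CRM for startups", "ChatGPT", False,
                "HubSpot, Pipedrive", "", False, "2025-01-15"),
    Observation("best CRM for startups", "Perplexity", True,
                "HubSpot, Salesforce", "positive", False, "2025-01-15"),
]
buffer = io.StringIO()  # swap for open("mentions.csv", "w", newline="") to write a file
write_log(rows, buffer)
print(buffer.getvalue())
```

Keeping one row per query run, rather than one row per query, is what makes the repeated sampling in the next step possible.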

Step 3: Sample Repeatedly

Because AI answers are probabilistic, a single query run gives you a noisy signal. For your highest-priority queries, run each query at least five times across different sessions (clear your browser history or use incognito mode between runs) and calculate a mention rate.

A mention rate of 80% means your brand appeared in 4 out of 5 runs of that query. A mention rate of 20% means you appeared once. This gives you a much more reliable signal than a single yes/no observation.
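To see how noisy five runs really are, it can help to put a rough uncertainty range around the rate. The sketch below computes the mention rate and adds a Wilson score interval; the interval is our own illustration of sampling noise and an assumption on top of the framework, not a required part of it.

```python
import math


def mention_rate(hits, runs):
    """Share of runs in which the brand appeared."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    return hits / runs


def wilson_interval(hits, runs, z=1.96):
    """Approximate 95% Wilson score interval for a proportion."""
    p = mention_rate(hits, runs)
    denom = 1 + z**2 / runs
    center = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return max(0.0, center - half), min(1.0, center + half)


rate = mention_rate(4, 5)
low, high = wilson_interval(4, 5)
print(f"rate={rate:.0%}, plausible range={low:.0%} to {high:.0%}")
```

With only five runs, an observed 80% is compatible with a true rate anywhere from roughly 38% to 96%, which is a good argument for running your highest-priority queries more than five times.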

Step 4: Analyze the Gap

Once you have baseline mention rates, compare them to your competitors. For each query, note which brands consistently appear and which do not. This gap analysis tells you two things:

- Which queries represent your biggest visibility opportunities — high-intent queries where competitors appear but you do not

- What content or authority signals your competitors have that you lack — the inputs that are causing AI models to favor them
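The gap analysis can be sketched as a comparison of per-query mention rates. The example below is illustrative only: `OurBrand` is a placeholder for your own brand, the observations are made-up sample data, and `rates_by_query` and `visibility_gaps` are hypothetical helper names.

```python
from collections import defaultdict

# Each record: (query, brand, appeared). Values are made-up sample data.
observations = [
    ("best CRM for startups", "OurBrand", False),
    ("best CRM for startups", "OurBrand", False),
    ("best CRM for startups", "HubSpot", True),
    ("best CRM for startups", "HubSpot", True),
    ("CRM software comparison", "OurBrand", True),
    ("CRM software comparison", "OurBrand", False),
    ("CRM software comparison", "Salesforce", True),
    ("CRM software comparison", "Salesforce", True),
]


def rates_by_query(records):
    """Return {query: {brand: mention_rate}}."""
    tally = defaultdict(lambda: defaultdict(lambda: [0, 0]))  # [hits, runs]
    for query, brand, appeared in records:
        cell = tally[query][brand]
        cell[0] += int(appeared)
        cell[1] += 1
    return {q: {b: h / n for b, (h, n) in brands.items()} for q, brands in tally.items()}


def visibility_gaps(rates, own_brand):
    """Per query, the best competitor rate minus our own rate, largest gap first."""
    gaps = []
    for query, brands in rates.items():
        ours = brands.get(own_brand, 0.0)
        rivals = [r for b, r in brands.items() if b != own_brand]
        if rivals:
            gaps.append((query, max(rivals) - ours))
    return sorted(gaps, key=lambda item: item[1], reverse=True)


gaps = visibility_gaps(rates_by_query(observations), "OurBrand")
for query, gap in gaps:
    print(f"{query}: gap {gap:+.0%}")
```

Sorting by gap size puts the queries where competitors appear most reliably and you appear least at the top of the list.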

For a deeper look at how your brand is currently performing across AI search surfaces, explore our recent AI visibility reports.

Step 5: Identify the Source Content

For surfaces like Perplexity that show citations, click through to the sources being cited when your competitors are mentioned. Ask: what type of content is being cited? Common patterns include:

- Long-form comparison articles on third-party review sites (G2, Capterra, TrustRadius)

- Category pages on high-authority publications (Forbes, TechCrunch, industry blogs)

- The brand's own documentation, case studies, or feature pages

- Reddit threads and community discussions

Research from BrightEdge has found that AI Overviews disproportionately cite content that is comprehensive, well-structured, and written at an appropriate reading level for the query audience. This is directly relevant to how you optimize your content to appear in AI answers.
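Once you have collected citation URLs from answers that mention a competitor, a quick tally by domain shows which kinds of sources dominate. This is a rough sketch using only the Python standard library; the URLs are hypothetical examples and `cited_domains` is an illustrative helper name.

```python
from collections import Counter
from urllib.parse import urlparse


def cited_domains(urls):
    """Count citations per domain, ignoring a leading 'www.'."""
    counts = Counter()
    for url in urls:
        host = urlparse(url).hostname or ""
        if host.startswith("www."):
            host = host[4:]
        if host:
            counts[host] += 1
    return counts


# Hypothetical citation URLs copied from answers that mentioned a competitor.
citations = [
    "https://www.g2.com/categories/crm",
    "https://www.capterra.com/customer-relationship-management-software/",
    "https://www.g2.com/products/example/reviews",
    "https://www.reddit.com/r/startups/comments/example/",
]
domain_counts = cited_domains(citations)
for domain, n in domain_counts.most_common():
    print(domain, n)
```

A tally dominated by review sites suggests a different priority than one dominated by the competitor's own documentation.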

Step 6: Set Up Ongoing Monitoring

Manual sampling is valuable for establishing a baseline, but you need a system for ongoing monitoring. Options include:

Manual cadence: Assign a team member to run your top 20 queries across your priority surfaces once per week. Log results in your tracking spreadsheet. This takes about 90 minutes per week and is free.

Semi-automated: Use tools like TryReadable's AI visibility analyzer to automate query sampling and mention detection across surfaces.

API-based: For teams with engineering resources, the Perplexity API and OpenAI API allow you to programmatically query these surfaces and parse responses for brand mentions. This enables higher-frequency sampling at scale.
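For the API-based option, the core logic is independent of any particular provider: fetch an answer, detect brand names, repeat, and average. The sketch below deliberately leaves the API call out. `fetch_answer` is a hypothetical callable you would implement with your own client code, and `fake_fetch` is a stand-in so the example runs offline.

```python
import re


def brand_pattern(brand):
    """Case-insensitive pattern that avoids matching inside longer words."""
    return re.compile(rf"(?<!\w){re.escape(brand)}(?!\w)", re.IGNORECASE)


def find_mentions(answer, brands):
    """Return the subset of brands that appear in the answer text."""
    return [b for b in brands if brand_pattern(b).search(answer)]


def sample(query, fetch_answer, brands, runs=5):
    """Call fetch_answer(query) `runs` times and return mention rates per brand."""
    hits = {b: 0 for b in brands}
    for _ in range(runs):
        for b in find_mentions(fetch_answer(query), brands):
            hits[b] += 1
    return {b: h / runs for b, h in hits.items()}


def fake_fetch(query):
    # Stand-in for a real API call; replace with your own client code.
    return "For a 10-person startup, HubSpot and Pipedrive are common picks."


rates = sample(
    "what CRM should a 10-person startup use?",
    fake_fetch,
    ["HubSpot", "Pipedrive", "Hub"],
)
print(rates)
```

The word-boundary check matters for short brand names: without it, a brand called "Hub" would be counted every time "HubSpot" appears.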

Tools for AI Brand Mention Tracking

The tooling landscape for AI search monitoring is still maturing, but here are the most useful options available today:

TryReadable

TryReadable is purpose-built for AI visibility monitoring. It tracks how your brand appears across AI search surfaces, scores your content for AI readability, and surfaces the specific changes that would improve your mention rate. Book a demo to see how it works for your brand.

Perplexity API

Perplexity's API allows you to submit queries programmatically and receive structured responses. Because Perplexity includes citations, you can parse responses to identify both brand mentions and the sources being cited. Perplexity's API documentation is publicly available.

OpenAI API

The OpenAI API with web search enabled lets you run search-backed queries programmatically. Responses will not exactly match what users see in the ChatGPT app, but they are a useful proxy. You can build a simple script that submits your query list, parses responses for brand mentions, and logs results to a spreadsheet. OpenAI's API documentation covers the web search integration.

Google Search Console

While not an AI-specific tool, Google Search Console is essential for understanding which of your pages are being indexed and potentially cited in AI Overviews. Pages that are not indexed cannot appear in AI Overviews. Google Search Console is free.

Mention and Brand24

Traditional brand monitoring tools like Mention and Brand24 are beginning to add AI search monitoring features. They are most useful for tracking when your brand is mentioned in the web content that AI models draw from—an indirect but important signal.

How to Interpret What You Find

Raw mention data is only useful if you know how to act on it. Here is how to interpret the most common patterns:

Pattern 1: High mention rate on Perplexity, low on Google AI Overviews

This typically means your brand has good coverage on the types of sources Perplexity favors (direct citations, documentation, community content) but lacks the authority signals Google weights more heavily (domain authority, structured data, E-E-A-T signals).

Action: Audit your on-site content for structured data markup and ensure your most important pages demonstrate clear expertise, authoritativeness, and trustworthiness signals.

Pattern 2: Mentioned in comparisons but not in category queries

This means AI models know your brand exists and understand its category, but do not consider it a default recommendation for broad category queries. You are in the consideration set but not the recommendation set.

Action: Focus on building content that establishes category leadership—original research, comprehensive guides, and third-party validation from high-authority sources.

Pattern 3: Mentioned but in a negative or limiting context

AI models sometimes mention brands with qualifiers: "X is a good option if you're on a budget" or "X works well for small teams but may not scale." These contextual mentions can actually hurt conversion if the qualifier does not match your positioning.

Action: Audit the sources being cited when these qualified mentions appear. The qualifier language often comes directly from review site content or comparison articles. Engage with those sources to ensure accurate positioning.
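Qualified mentions can be flagged automatically with a simple text heuristic. The sketch below is a rough first pass rather than a sentiment model: the cue list is an assumption you would tune for your category, and `Acme` and `Globex` are placeholder brand names.

```python
import re

# Illustrative qualifier cues; extend this list for your own category.
QUALIFIER_CUES = ("but", "however", "if you", "may not", "only for")


def qualified_mentions(answer, brand):
    """Return sentences that mention the brand together with a qualifier cue."""
    sentences = re.split(r"(?<=[.!?])\s+", answer.strip())
    flagged = []
    for sentence in sentences:
        lowered = sentence.lower()
        if brand.lower() in lowered and any(
            re.search(rf"\b{re.escape(cue)}\b", lowered) for cue in QUALIFIER_CUES
        ):
            flagged.append(sentence)
    return flagged


answer = (
    "Acme works well for small teams but may not scale. "
    "Globex is a popular choice for enterprises."
)
flagged = qualified_mentions(answer, "Acme")
print(flagged)
```

Flagged sentences still need a human read, since a cue word like "but" can introduce praise as easily as a limitation.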

Visibility Rate Benchmark Data

The following table shows approximate AI mention rates by brand tier, based on aggregated data from TryReadable's analysis of B2B SaaS brands across category queries. Use this as a rough benchmark for interpreting your own results.

| Brand Tier | Avg. Mention Rate (Category Queries) | Avg. Mention Rate (Comparison Queries) |
|---|---|---|
| Category leader (top 3 by market share) | 72–85% | 88–95% |
| Established challenger (top 10) | 35–55% | 60–75% |
| Growing brand (Series A–B) | 12–28% | 25–45% |
| Early-stage / niche brand | 2–10% | 8–20% |

Note: These ranges are illustrative benchmarks based on TryReadable's internal analysis and should be treated as directional, not definitive.

Example of an AI visibility dashboard tracking brand mention rates across surfaces over time.

Common Mistakes That Kill AI Visibility

After analyzing hundreds of brands' AI visibility, we see the same mistakes repeatedly. Here are the most damaging ones:

Mistake 1: Optimizing for keywords instead of questions

AI models are trained on natural language. Content that is written as a series of keyword-stuffed headers does not read naturally and is less likely to be cited in conversational AI answers. Research from Semrush found that AI Overviews favor content that directly answers questions in clear, complete sentences.

Fix: Rewrite your most important pages to answer specific questions directly. Use question-based H2s and H3s. Write the answer in the first sentence after the heading.

Mistake 2: Ignoring third-party content

AI models do not only draw from your own website. They draw from the entire web—and for brand mentions specifically, third-party sources like G2, Capterra, Reddit, and industry publications often carry more weight than your own marketing pages.

Fix: Actively manage your presence on third-party review and comparison sites. Ensure your G2 and Capterra profiles are complete, accurate, and up to date. Engage with industry publications to secure coverage.

Mistake 3: Writing at the wrong reading level

Studies on AI content preferences consistently show that AI models favor content that is clear and accessible—not dumbed down, but not unnecessarily complex either. Content written at a graduate-school reading level for a general audience is less likely to be cited than content written at a clear, professional level.

This is core to what TryReadable measures. You can check your content's readability score and AI-friendliness here.
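For a rough sense of reading level without any tooling, the widely used Flesch-Kincaid grade formula can be computed in a few lines. This is a generic sketch and not a description of how TryReadable scores content; the syllable counter is a crude heuristic, so treat the output as approximate.

```python
import re


def count_syllables(word):
    """Rough heuristic: count vowel groups, discounting a trailing silent 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_kincaid_grade(text):
    """Grade level = 0.39 * (words/sentences) + 11.8 * (syllables/words) - 15.59."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return 0.39 * len(words) / len(sentences) + 11.8 * syllables / len(words) - 15.59


simple = "We track brand mentions. We log each answer. We compare the results."
dense = (
    "Systematic longitudinal observation of probabilistic generative outputs "
    "necessitates statistically defensible methodological instrumentation."
)
print(round(flesch_kincaid_grade(simple), 1), round(flesch_kincaid_grade(dense), 1))
```

Short sentences built from short words score far lower than a single long sentence packed with polysyllabic terms, even when both say roughly the same thing.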

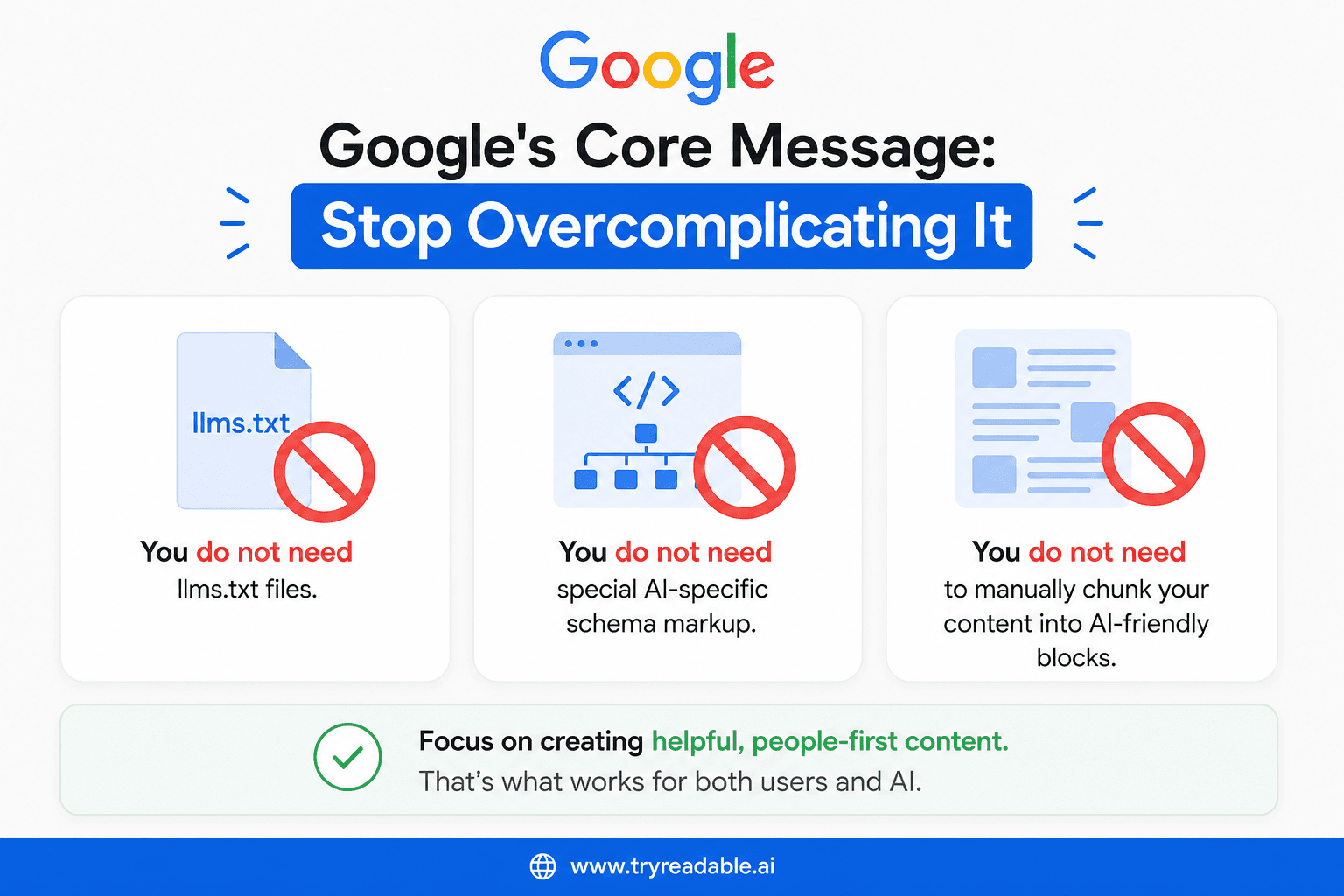

Mistake 4: Neglecting structured data

Structured data (schema markup) helps Google understand what a page is about, and clear machine-readable context plausibly helps AI Overviews interpret it as well. Brands that have not implemented basic schema markup, especially on product pages, FAQ pages, and how-to content, are giving those systems less to work with.

Fix: Implement FAQ schema on pages that answer common questions. Use Product schema on product pages. Use HowTo schema on tutorial content. Note that Google retired HowTo rich results and limited FAQ rich results to a small set of sites in 2023, so treat this markup as a way to describe your content rather than a way to earn a visual enhancement. Google's structured data documentation is the authoritative reference.
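As an illustration of what FAQ markup looks like, the sketch below generates a schema.org `FAQPage` object as JSON-LD. The `faq_jsonld` helper is a hypothetical name, and the question and answer text are sample content; check the output against Google's structured data documentation before publishing.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


markup = faq_jsonld([
    ("How often do AI search results change?",
     "They can change daily on surfaces with real-time web access."),
])
# Embed the output in the page inside <script type="application/ld+json">...</script>.
print(json.dumps(markup, indent=2))
```

Generating the markup from the same question-and-answer pairs that appear on the page keeps the structured data and the visible content in sync.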

Mistake 5: Treating AI visibility as a one-time audit

AI models are updated continuously. The sources they draw from change. The queries users ask evolve. A brand that appears prominently in AI answers today may not appear next quarter if it does not maintain its content and authority signals.

Fix: Build AI visibility monitoring into your regular marketing cadence. A monthly review of your top 20 queries is the minimum viable commitment.
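A recurring review is easier when the log can be rolled up by month and scanned for drops. The sketch below is one possible approach; the log entries are invented sample data, and the 20-point drop threshold is an arbitrary assumption you should adjust.

```python
from collections import defaultdict
from datetime import date

# (observation date, brand mentioned?) pairs; sample values are invented.
log = [
    (date(2025, 1, 6), True), (date(2025, 1, 13), True),
    (date(2025, 1, 20), True), (date(2025, 1, 27), False),
    (date(2025, 2, 3), False), (date(2025, 2, 10), True),
    (date(2025, 2, 17), False), (date(2025, 2, 24), False),
]


def monthly_rates(records):
    """Return {(year, month): mention_rate}, in chronological order."""
    buckets = defaultdict(list)
    for day, mentioned in records:
        buckets[(day.year, day.month)].append(mentioned)
    return {key: sum(vals) / len(vals) for key, vals in sorted(buckets.items())}


def drops(rates, threshold=0.2):
    """Months whose rate fell by more than `threshold` versus the previous month."""
    keys = list(rates)
    return [cur for prev, cur in zip(keys, keys[1:]) if rates[prev] - rates[cur] > threshold]


rates = monthly_rates(log)
print(rates, drops(rates))
```

In this sample the rate falls from 75% in January to 25% in February, so February is flagged for review.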

Mistake 6: Ignoring content freshness

AI models, particularly those with web access like Perplexity and ChatGPT Search, favor recent content. A comprehensive guide published in 2021 may be outcompeted by a less thorough guide published in 2024 simply because the newer guide is more recent.

Fix: Audit your most important pages for freshness. Add a "last updated" date. Refresh statistics, examples, and recommendations annually at minimum.
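A freshness audit can start as a simple check of each page's "last updated" date against an age limit. The sketch below assumes you already have those dates in hand; the page paths and dates are hypothetical, and the 365-day limit mirrors the annual refresh suggested above.

```python
from datetime import date

# Hypothetical pages with their "last updated" dates.
pages = {
    "/guides/email-open-rates": date(2021, 3, 10),
    "/blog/crm-comparison": date(2024, 11, 2),
}


def stale_pages(last_updated, today, max_age_days=365):
    """Return (path, age_in_days) for pages older than max_age_days, oldest first."""
    aged = [(path, (today - updated).days) for path, updated in last_updated.items()]
    return sorted(
        (item for item in aged if item[1] > max_age_days),
        key=lambda item: -item[1],
    )


stale = stale_pages(pages, today=date(2025, 1, 15))
print(stale)
```

Passing `today` explicitly keeps the check reproducible, which helps when you compare audits run on different dates.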

What to Do This Week

You do not need to implement everything in this guide at once. Here are three high-impact tasks you can complete in the next five business days:

Task 1: Run a 20-query baseline audit (2–3 hours)

Select 20 queries that represent your most important category, problem, and comparison searches. Run each query on ChatGPT, Perplexity, and Google (to check for AI Overviews). Log your results in a spreadsheet using the template structure from Step 2 above. This gives you your baseline mention rate and identifies your biggest gaps immediately.

Task 2: Audit your top 5 pages for AI readability (1 hour)

Take the five pages on your site most likely to be cited in AI answers—typically your homepage, your main product page, and your two or three most-trafficked blog posts. Run each through TryReadable's analyzer and note the readability score and any specific recommendations. Prioritize the changes that can be made without a full rewrite.

Task 3: Check your third-party profiles (30 minutes)

Log into your G2, Capterra, and TrustRadius profiles (or create them if they do not exist). Ensure your product description, category tags, and feature list are accurate and complete. These profiles are frequently cited in AI answers for comparison queries and are often neglected by marketing teams.

FAQ

How often do AI search results change?

AI search results can change daily, especially for surfaces with real-time web access like Perplexity and ChatGPT Search. Google AI Overviews tend to be more stable but still update as Google's index and AI models are refreshed. For practical monitoring purposes, weekly sampling of your priority queries is sufficient for most brands.

Can I pay to appear in AI search results?

Not directly. As of early 2025, none of the major AI search surfaces let you buy a mention inside the generated answer text itself, although some have begun showing labeled ads alongside AI answers. Paid advertising can also influence the web content that AI models draw from; for example, sponsored content on high-authority publications may be indexed and cited. The primary lever for AI visibility remains organic content quality and authority.

Does my website's domain authority affect AI visibility?

Yes, but it is not the only factor. Domain authority influences whether your own pages are cited as sources in AI answers. However, AI models also draw from third-party sources that mention your brand—and those sources may have higher domain authority than your own site. A brand with a lower-authority domain can still achieve strong AI visibility through coverage on high-authority third-party sites.

What is the relationship between traditional SEO and AI visibility?

They are related but not identical. Strong traditional SEO—good content, clean technical implementation, quality backlinks—creates a foundation for AI visibility. But AI models weight some factors differently than traditional search algorithms. Content clarity, question-answering structure, and third-party mentions are particularly important for AI visibility in ways that traditional SEO metrics do not fully capture.

How do I know if an AI model is citing my content?

For Perplexity, citations are shown explicitly in the interface. For Google AI Overviews, cited sources appear as expandable chips below the generated answer. For ChatGPT Search, sources are listed at the bottom of responses. For non-search AI models like Claude and Gemini, there is no direct citation mechanism—you can only infer influence from the content of the response.

Should I create content specifically for AI search?

Yes, but not at the expense of human readability. The best approach is to create content that is genuinely useful, clearly written, and well-structured—this serves both human readers and AI models. Tactics that optimize purely for AI at the expense of human experience (such as adding excessive FAQ sections or unnatural question-answer formatting) tend to underperform over time.

How does TryReadable help with AI visibility tracking?

TryReadable combines content readability analysis with AI visibility monitoring. It analyzes your existing content for the signals that influence AI citation—reading level, structure, question-answering clarity, and freshness—and tracks your brand mention rates across AI search surfaces over time. You can explore our guides for more detail on how the platform works, or book a demo to see it applied to your specific brand.

Sources

- SparkToro & Datos — Zero-click search data

- Google Blog — AI Overviews announcement and reach

- OpenAI — ChatGPT growth and user statistics

- Ahrefs — Google AI Overviews SEO research

- BrightEdge — AI Overviews content analysis

- Semrush — AI Overviews content preferences

- Nielsen Norman Group — AI-generated content and readability

- Google Developers — Structured data documentation

- Perplexity AI — API documentation

- OpenAI — API and web search documentation

Start Tracking Your AI Visibility Today

The brands that will win in AI search are not necessarily the ones with the biggest budgets or the most backlinks. They are the ones that understand how AI models discover, evaluate, and cite content—and build their content strategy accordingly.

The framework in this guide gives you everything you need to start. The baseline audit takes a few hours. The ongoing monitoring takes less than two hours per week. And the payoff compounds: your brand appears in the AI-generated answers that are increasingly the first and last thing your potential customers read.

Ready to see where your brand stands right now?

Analyze your AI visibility for free →

Or, if you want a guided walkthrough of how TryReadable can automate this process for your team, book a demo.

You can also explore our complete library of AI visibility guides and recent AI visibility reports to benchmark your performance against brands in your category.
