Table of contents

- What Google Actually Means by AEO, GEO, and AI Search

- Google's Core Message: Stop Overcomplicating It

- The Foundational SEO Practices That Still Drive AI Search Results

- People-First Content: The Real Signal Google's AI Looks For

- What Google Says You Can Safely Ignore (Myth-Busting)

- How to Prepare for Google's Agentic AI Experiences

- Your Practical Action Checklist: What to Focus on Right Now

- The Bottom Line

If you have spent any time in marketing circles over the past year, you have heard the terms AEO, GEO, and AI Search thrown around like they represent a completely new discipline that requires a completely new strategy. Agencies are pitching AI content audits. Consultants are selling "AI-readiness" packages. Founders are losing sleep wondering whether their entire content library is about to become invisible.

Here is the good news: Google has published an official guide to optimizing for generative AI features in Google Search, and the core message is far more reassuring than the industry noise suggests. Most of what you already do for SEO applies directly to AI search. Most of the new tactics being sold to you are unnecessary. And the path forward is cleaner than you think.

This article walks through Google's actual recommendations in plain language, with real-world examples at every step, so you can make confident decisions about where to spend your time and budget.

What Google Actually Means by AEO, GEO, and AI Search

Before diving into recommendations, it helps to understand what these terms actually describe, because the marketing industry has made them sound more complicated than they are.

AEO stands for Answer Engine Optimization. The idea is that search engines, especially Google, are increasingly functioning as answer engines rather than link directories. Instead of returning ten blue links and letting users click through to find answers, Google now synthesizes information and delivers a direct answer at the top of the results page. AEO is the practice of structuring your content so it gets selected as the source for those answers.

GEO stands for Generative Engine Optimization. This is a newer term that specifically refers to optimizing content for AI-generated responses, like the AI Overviews that now appear at the top of many Google searches. Where traditional SEO focuses on ranking in the list of links, GEO focuses on being cited or summarized within the AI-generated text block above those links.

AI Search is the broader umbrella term for all of this: the shift toward Google using large language models and generative AI to interpret queries, synthesize answers, and increasingly take actions on behalf of users.

Here is a concrete example of how this plays out. Imagine a product manager opens Google and types: "best project management tool for remote teams." In the old Google, they would see a list of links to review sites, blog posts, and vendor pages. In the new Google, they see an AI Overview at the top of the page that reads something like: "For remote teams, tools like Asana, Linear, and Notion are frequently recommended. Asana is noted for its task dependencies and timeline views, while Notion offers flexible wikis alongside project tracking."

That AI Overview was generated by Google's AI reading and synthesizing content from multiple web pages. The pages that got cited were not necessarily the ones with the most backlinks or the highest domain authority. They were the ones with clear, accurate, well-structured information that Google's AI could confidently extract and attribute.

The critical point Google makes in its official guide: AEO and GEO are not separate disciplines. They are, in Google's own framing, still SEO. The signals that determine whether your content appears in an AI Overview are largely the same signals that determine whether your content ranks in traditional search. This is not a new game. It is the same game with a new scoreboard.

Google released its dedicated AI optimization guide because the volume of misinformation and unnecessary complexity being sold to site owners had reached a point where official clarification was needed. The guide is essentially Google saying: slow down, most of what you are hearing is wrong, here is what actually matters.

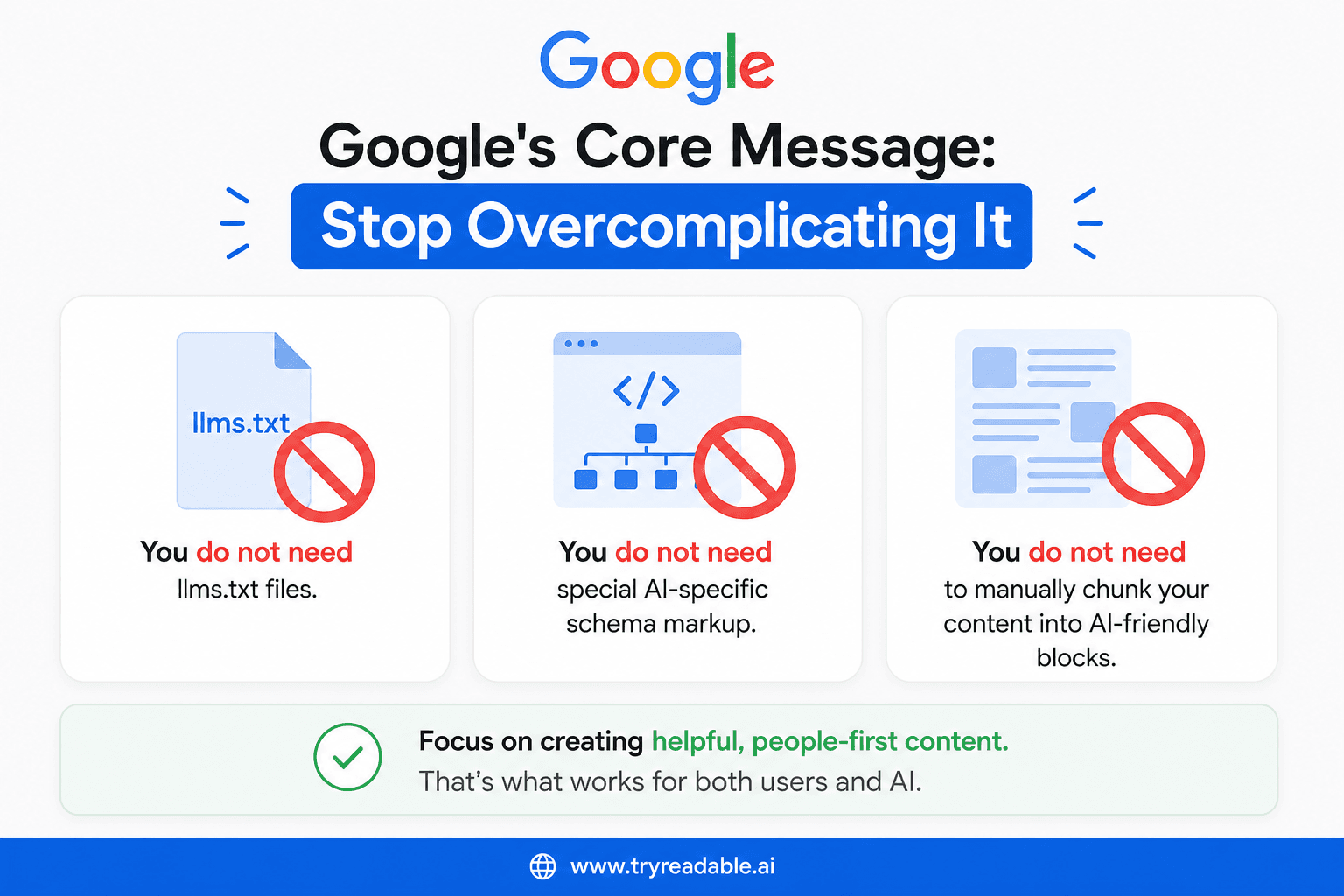

Google's Core Message: Stop Overcomplicating It

The single most important thing to understand about Google's AI search guidance is that it is, at its heart, a message of simplification. Google is not asking you to rebuild your content strategy. It is asking you to do the fundamentals well.

Search Engine Journal's coverage of the guide summarized the core position clearly: Google explicitly calls AEO and GEO "still SEO." There is no separate optimization track for AI features. The same crawlability, quality, and relevance signals that have always mattered continue to matter.

More specifically, Google's guide debunks several tactics that have been aggressively marketed as necessary for AI search visibility.

You do not need llms.txt files. This is a file format that some in the industry proposed as a way to give AI systems structured instructions about your site, similar to how robots.txt works for crawlers. Google has confirmed it does not use llms.txt files to decide what content to include in AI features. Creating one is not harmful, but it provides no benefit for Google AI Overviews.

You do not need special AI-specific schema markup. Standard structured data, the kind you may already have implemented for rich results, is sufficient. There is no new schema type required specifically for AI features.

You do not need to manually chunk your content into AI-friendly blocks. This is perhaps the most widely sold unnecessary tactic. The idea is that you should restructure your pages into short, discrete question-and-answer blocks so AI systems can more easily extract information. Google says this is not a ranking factor for AI features.

Consider a realistic scenario: a SaaS founder reads an article in early 2024 claiming that AI search requires content to be broken into 150-word chunks with explicit question headers. They spend three weeks having their team reformat 200 blog posts. The result is no measurable change in AI Overview appearances, because Google's systems are sophisticated enough to extract relevant information from well-written prose without needing it pre-chunked.

Or consider a marketer who reads that AI crawlers need separate permissions in robots.txt. They add a new rule specifically blocking or allowing certain AI bots. Google's guidance makes clear that if your standard robots.txt is properly configured for Googlebot, you are already covered. Adding AI-specific rules is unnecessary overhead.

The foundational message is this: if your SEO is solid, you are already optimizing for AI search. The work is not to add new layers on top of your existing strategy. The work is to make sure the existing strategy is executed well.

The Foundational SEO Practices That Still Drive AI Search Results

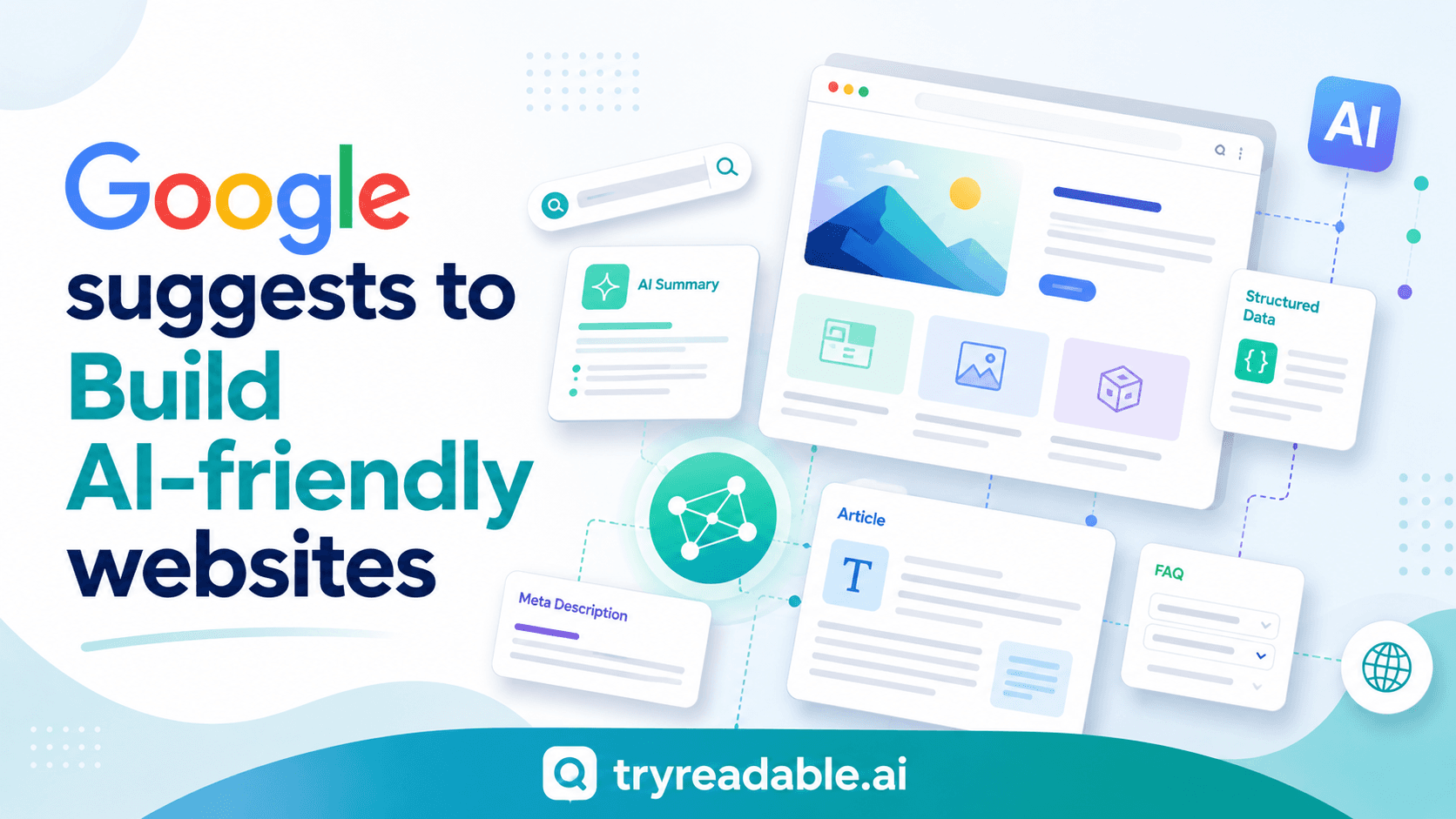

Google's AI optimization guide is explicit that the technical foundations of SEO are the same foundations required for AI search visibility. Let us walk through the most important ones with concrete examples.

Crawlability and Indexation

Google's AI features can only surface content that Google has been able to crawl and index. This sounds obvious, but crawlability errors are surprisingly common and often go unnoticed for months.

If a page is blocked in your robots.txt file, Google cannot read it. If it cannot read it, it cannot include it in an AI Overview. Full stop.

Here is a scenario that plays out more often than you would expect: a fintech startup has a robust blog covering topics like expense management, payroll compliance, and financial forecasting. Their engineering team, during a site migration, accidentally adds a rule to robots.txt that blocks the entire /blog directory. The pages remain live and accessible to human visitors. But Googlebot stops crawling them. Within weeks, those pages drop out of the index. They stop appearing in traditional search results. And they stop being cited in AI Overviews.

The fix is straightforward once you catch it, but the damage accumulates silently. This is why Google's guide emphasizes checking the Page indexing report in Google Search Console (formerly the Coverage report) regularly. It shows you which pages are indexed, which are not, and why.
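A check like this can also be automated so that a bad rule is caught at deploy time rather than weeks later. The sketch below uses Python's standard-library `urllib.robotparser`. The robots.txt content and the `example.com` URLs are made up for illustration, and the standard-library parser only approximates how Googlebot reads rules (its path matching is simple prefix matching), so treat it as a first-pass check rather than a replacement for Search Console.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt left behind by a migration: it blocks all of /blog/.
ROBOTS_TXT = """\
User-agent: *
Disallow: /blog/
"""

# Hypothetical pages that should stay crawlable.
IMPORTANT_URLS = [
    "https://example.com/blog/payroll-compliance",
    "https://example.com/pricing",
]

print(blocked_urls(ROBOTS_TXT, IMPORTANT_URLS))
```

Running a check like this against a list of must-be-crawlable URLs in a deployment pipeline would flag the /blog/ rule from the scenario above before it reaches production.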

Beyond robots.txt, pages need to actually load and render correctly. Google's systems need to be able to read the content on the page. If your site relies heavily on JavaScript to render content, and that JavaScript fails or takes too long to execute, Google may see a blank or incomplete page. The Google Search Central documentation on JavaScript SEO covers this in detail, but the practical takeaway is: test your pages with JavaScript disabled and confirm the core content is still visible in the HTML.
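The "JavaScript disabled" test can be approximated in code by looking only at the raw HTML the server returns. The following is a minimal sketch using the standard-library `html.parser`; the two HTML snippets and the key phrase are invented examples, and the check says nothing about what Google sees after it renders JavaScript, only whether the content is present before any script runs.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text outside of script, style, and template elements."""

    SKIPPED_TAGS = {"script", "style", "template"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def content_in_raw_html(html: str, phrase: str) -> bool:
    """True if phrase appears in the page text without running any JavaScript."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return phrase.lower() in " ".join(parser.chunks).lower()


PHRASE = "Expense management guide"
SERVER_RENDERED = "<html><body><h1>Expense management guide</h1><p>Track receipts weekly.</p></body></html>"
CLIENT_RENDERED = '<html><body><div id="root"></div><script>render("Expense management guide")</script></body></html>'

print(content_in_raw_html(SERVER_RENDERED, PHRASE))
print(content_in_raw_html(CLIENT_RENDERED, PHRASE))
```

The second page contains the phrase only inside a script, so a reader that does not execute JavaScript would see an empty container.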

Structured Data

Structured data, implemented using Schema.org markup, helps Google understand what your content is about and how different pieces of information relate to each other. This remains valuable for AI features.

A recipe website is a clean example. If a recipe page includes structured data marking up the cooking time, ingredient list, calorie count, and serving size, Google's AI can extract those specific data points and surface them directly in an AI Overview. A user searching "how long does it take to make beef bourguignon" might see the answer pulled directly from a structured data field, with the source site credited.

Without structured data, Google can still extract this information from the page text, but structured data makes the extraction more reliable and more precise. For product pages, service pages, FAQ sections, and how-to guides, implementing the relevant schema types from Google's structured data documentation is time well spent.
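To make the recipe example concrete, here is a rough sketch of how such markup could be generated as JSON-LD. The property names (`cookTime`, `recipeIngredient`, `recipeYield`, `nutrition`) come from the Schema.org Recipe vocabulary; the function name and every value passed in are placeholders for illustration, not real recipe data.

```python
import json


def recipe_jsonld(name: str, cook_minutes: int, ingredients: list[str],
                  servings: int, calories: int) -> str:
    """Build a JSON-LD script tag describing a recipe with Schema.org terms."""
    data = {
        "@context": "https://schema.org",
        "@type": "Recipe",
        "name": name,
        # Schema.org expects durations in ISO 8601 format, e.g. PT180M.
        "cookTime": f"PT{cook_minutes}M",
        "recipeIngredient": list(ingredients),
        "recipeYield": f"{servings} servings",
        "nutrition": {
            "@type": "NutritionInformation",
            "calories": f"{calories} calories",
        },
    }
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


# Placeholder values, chosen only to show the shape of the output.
snippet = recipe_jsonld(
    name="Beef Bourguignon",
    cook_minutes=180,
    ingredients=["beef chuck", "red wine", "pearl onions"],
    servings=6,
    calories=520,
)
print(snippet)
```

The resulting tag would sit in the page's HTML, and the values should always match what the visible recipe says.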

Clear Page Titles and Descriptive Metadata

Page titles and meta descriptions are not just for traditional search results. They help Google's AI understand the topic and scope of a page before it reads the full content. A page titled "Q3 Report" tells Google almost nothing. A page titled "Q3 2024 SaaS Churn Rate Benchmarks: Analysis of 500 Companies" tells Google exactly what the page covers, who it is for, and what kind of data it contains.

This clarity directly influences whether Google's AI selects your page as a source for a relevant query.

People-First Content: The Real Signal Google's AI Looks For

Technical SEO gets your pages into the game. Content quality determines whether they win. Google's helpful content guidelines are the clearest statement of what Google's AI features are actually looking for.

The framework Google uses is called E-E-A-T: Experience, Expertise, Authoritativeness, and Trustworthiness. These four signals are how Google evaluates whether a piece of content is genuinely useful or whether it was produced primarily to rank.

Experience refers to first-hand knowledge. Did the person who wrote this content actually do the thing they are writing about? A post about email marketing written by someone who has run email campaigns, tested subject lines, analyzed open rates, and iterated based on results signals experience. A post that summarizes advice from other posts does not.

The contrast is stark when you look at examples. Consider two articles both titled "10 Tips for Email Marketing":

Article A is a generic listicle. Each tip is a sentence or two. The advice is accurate but entirely surface-level: "Write compelling subject lines." "Segment your list." "Test your send times." There is no data, no specific context, no evidence that the author has ever actually sent an email campaign.

Article B is written by a founder who shares the actual results of a 90-day email experiment. They include the specific subject lines they tested, the open rates for each, the segments they created, and what they changed based on the data. They include a screenshot of their Mailchimp dashboard showing the before and after. They explain what did not work and why.

Google's AI is significantly more likely to cite Article B in an AI Overview about email marketing. Not because it is longer or because it has more keywords, but because it demonstrates genuine experience and provides original value that cannot be found elsewhere.

Expertise means the content reflects deep knowledge of the subject. This does not always require formal credentials. A self-taught developer writing about a specific coding problem they solved can demonstrate expertise through the depth and accuracy of their explanation. But expertise needs to be evident in the content itself, not just claimed in an author bio.

Authoritativeness is built over time through consistent, accurate publishing and through recognition from other credible sources. When other authoritative sites link to your content, it signals to Google that your site is a reliable source on that topic.

Trustworthiness encompasses accuracy, transparency, and honesty. Pages that cite their sources, disclose conflicts of interest, provide accurate information, and are transparent about who wrote the content and why score higher on trustworthiness signals.

Another example: a cybersecurity company that publishes a detailed post-mortem of a real security incident it experienced shows all four signals working together. The content demonstrates experience (they lived through it), expertise (they understand the technical details), authoritativeness (other security professionals will reference it), and trustworthiness (they are being transparent about a failure). That kind of content gets cited in AI Overviews because it is genuinely unique and valuable.

Google's helpful content guide includes a set of self-assessment questions that are worth applying to every piece of content you publish:

- Does this content provide original information, reporting, research, or analysis?

- Does it provide a substantial, complete, or comprehensive description of the topic?

- Does it provide insightful analysis or interesting information beyond the obvious?

- After reading this content, would someone feel they learned enough to achieve their goal, or would they feel the need to search again?

- Is this content written by an expert or enthusiast who demonstrably knows the topic well?

If you answer "no" to several of these questions, the content is unlikely to be selected for AI Overviews regardless of how well it is technically optimized. You can use TryReadable's content analysis tool to evaluate your existing content against readability and quality signals before publishing.

The last point in Google's guidance on this topic is worth emphasizing: avoid search-engine-first content. Thin articles written primarily to capture keyword traffic, with no genuine effort to help the reader, are actively deprioritized in AI features. Google's systems have become increasingly good at distinguishing between content written for people and content written for algorithms.

What Google Says You Can Safely Ignore (Myth-Busting)

One of the most valuable things about Google's AI optimization guide is what it tells you to stop doing. In an industry where new tactics are constantly being sold as essential, having Google explicitly say "this is not necessary" is genuinely useful.

Here is a clear list of what Google has officially said you do not need for AI search visibility:

llms.txt files. This file format was proposed as a way to give AI language models structured guidance about your site, similar to robots.txt. Some SEO tools and agencies began recommending it as a must-have for AI search. Google's guide is explicit: it does not use llms.txt files to determine what content to include in AI features. You can create one if you want to communicate with other AI systems, but it has no effect on Google AI Overviews.

Special AI-optimized schema markup. Beyond the standard structured data types that have always been recommended for rich results (FAQ, HowTo, Product, Article, etc.), there is no new schema specifically for AI features. If you have already implemented relevant structured data correctly, you are done. If you have not, implement the standard types. Do not pay for custom "AI schema" packages.

Manual content chunking. This is the practice of breaking your content into short, discrete blocks, often formatted as explicit question-and-answer pairs, on the theory that AI systems prefer pre-chunked content. Google's guide says this is not a ranking factor. Google's AI is capable of extracting relevant information from well-written, naturally structured content. Reformatting your entire content library into Q&A blocks provides no benefit and often makes the content less readable for human visitors.

To make this concrete: imagine an agency pitching a client on a "content restructuring for AI readiness" project. The proposal involves reformatting 300 existing blog posts into explicit Q&A format, adding AI-specific metadata, and creating a new llms.txt file. The quoted price is significant. According to Google's own guidance, none of these three deliverables would improve the client's visibility in AI Overviews. The budget would be better spent creating new, genuinely helpful content or improving the technical health of the site.

Separate sitemaps or crawl instructions for AI bots. If your standard XML sitemap is up to date and your robots.txt is correctly configured for Googlebot, you do not need separate versions for AI crawlers. Google's AI features use the same crawl infrastructure as traditional search. Managing one well-maintained sitemap is sufficient.

Blocking or allowing specific AI crawlers in robots.txt. Unless you have a specific reason to block a particular AI crawler (for example, if you do not want your content used to train AI models), you do not need to add AI-specific rules to your robots.txt. Google's AI Overviews use Googlebot, which your existing robots.txt already handles.

The pattern here is consistent: the tactics being sold as AI-specific are either unnecessary, ineffective, or already covered by standard SEO practice. If you are working with an agency or consultant who is recommending any of the above as essential for AI search, ask them to point you to Google's official documentation supporting that claim. You will find that the documentation says the opposite.

How to Prepare for Google's Agentic AI Experiences

While the current guidance is largely reassuring, Google's AI optimization guide does introduce one genuinely new concept that founders and marketers should understand: agentic AI experiences.

Google is building AI systems that can take multi-step actions on behalf of users, not just answer questions. These are called "agents," and they represent a meaningful shift in how users might interact with search.

Here is what this looks like in practice. Instead of a user searching "cheapest CRM for a 10-person team" and reading through review articles, they might ask Google's AI agent: "Find and compare the three cheapest CRMs for a 10-person team, and tell me which one has the best free trial." The agent would then browse multiple websites, extract pricing information, compare features, read trial terms, and return a synthesized recommendation, all without the user visiting a single page.

For this kind of interaction to work, the agent needs to be able to navigate your site, find the relevant information, and extract it reliably. This creates some specific considerations:

Fast load times matter more. An agent browsing your site programmatically has less patience for slow pages than a human user. If your pricing page takes eight seconds to load, an agent may time out or move on to a competitor's site. Google's Core Web Vitals remain the relevant benchmark here.

Clear navigation and site structure become critical. An agent needs to be able to find your pricing page, your features page, and your comparison content without getting lost in a confusing site architecture. If your navigation is unclear or your key pages are buried, agents may not find them.

Functional forms and interactive elements need to work correctly. If an agent is trying to check availability or get a quote, and your contact form is broken or your pricing calculator does not function, the agent cannot complete the task.

Structured product and service data becomes more important. When an agent is comparing CRMs, it needs to extract specific data points: price per user, number of contacts included, features available at each tier, free trial duration. If this information is clearly structured on your page, either through schema markup or through consistent, scannable formatting, agents can extract it reliably. If it is buried in paragraphs of marketing copy, extraction becomes unreliable.

Consider a B2B software company with a pricing page that lists three tiers. If the page clearly shows the price per user per month, the key features at each tier, and the trial terms in a structured table with schema markup, an AI agent comparing CRM options can extract that information accurately. If the pricing page instead says "contact us for pricing" with no further detail, the agent has nothing to work with and will move on to a competitor who publishes clear pricing.
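The difference between structured and buried pricing is easy to see from the consuming side. The sketch below is not how Google's agents work (that is not publicly specified); it only illustrates, under that assumption, how little code any program needs to read pricing tiers when they are published as JSON-LD. The page, the product name "ExampleCRM", and the prices are all hypothetical.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collects the parsed contents of every application/ld+json script tag."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self._buffer: list[str] = []
        self.documents: list[dict] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.documents.append(json.loads("".join(self._buffer)))
            self._in_jsonld = False


def extract_offers(html: str) -> list[tuple[str, float, str]]:
    """Return (tier name, price, currency) for each offer found in the page."""
    collector = JsonLdCollector()
    collector.feed(html)
    collector.close()
    return [
        (offer["name"], float(offer["price"]), offer["priceCurrency"])
        for doc in collector.documents
        for offer in doc.get("offers", [])
    ]


# Hypothetical pricing page with three tiers marked up as Schema.org offers.
PRICING_PAGE = """
<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Product", "name": "ExampleCRM",
 "offers": [
   {"@type": "Offer", "name": "Starter", "price": "12.00", "priceCurrency": "USD"},
   {"@type": "Offer", "name": "Team", "price": "29.00", "priceCurrency": "USD"},
   {"@type": "Offer", "name": "Enterprise", "price": "59.00", "priceCurrency": "USD"}
 ]}
</script>
</head><body><h1>Pricing</h1></body></html>
"""

print(extract_offers(PRICING_PAGE))
```

A "contact us for pricing" page gives the same function an empty list, which is the programmatic version of the agent having nothing to work with.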

It is important to note that Google acknowledges in its guide that agentic AI experiences are still early-stage. Standards are still evolving. The specific technical requirements for agentic interactions are not yet fully defined. Google's recommendation for now is to focus on clean, accessible site architecture, which is good advice regardless of how agentic AI develops.

The practical implication for founders and marketers: you do not need to rebuild your site for agentic AI today. But you should make sure your site is fast, navigable, and contains clearly structured information about your products and services. These are improvements that benefit human visitors, traditional search, and AI agents simultaneously.

If you want to see how your current content performs against readability and structure standards, TryReadable's analysis tool can give you a starting point. For a more comprehensive review of your content strategy in light of these changes, book a demo to see how we work with marketing teams.

Your Practical Action Checklist: What to Focus on Right Now

Based directly on Google's official recommendations, here is a prioritized action list for founders and marketers. These are not speculative tactics. They are the specific practices Google has identified as the foundation for visibility in both traditional search and AI features.

1. Audit Your Crawlability

Open Google Search Console and navigate to the Page indexing report (previously called the Coverage report). Look for pages that are not indexed or have errors. Pay particular attention to:

- Pages blocked by robots.txt that should be accessible

- Pages returning 404 or 500 errors

- Pages marked as "Discovered - currently not indexed" (which may indicate crawl budget issues or quality signals)

If you find that important pages are not indexed, investigate why before doing anything else. No amount of content optimization matters if Google cannot read the page.

Also fetch your key pages using the URL Inspection tool in Search Console. This shows you exactly what Google sees when it crawls your page, including whether JavaScript is rendering correctly.
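Between Search Console reviews, a small script can watch for the 404 and 500 errors mentioned above. This is a minimal sketch using the standard-library `urllib`. The function names are hypothetical, it assumes your server answers HEAD requests, and it does not handle connection failures, so it complements the URL Inspection tool rather than replacing it. The demonstration at the bottom uses made-up status codes instead of real network calls.

```python
import urllib.error
import urllib.request


def fetch_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status code for url using a HEAD request."""
    request = urllib.request.Request(
        url, method="HEAD", headers={"User-Agent": "crawl-audit-sketch"}
    )
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # 4xx and 5xx responses are raised as exceptions; keep the code.
        return err.code


def find_error_pages(urls: list[str], get_status=fetch_status) -> dict[str, int]:
    """Map each URL that returns a 4xx or 5xx status to that status code."""
    problems = {}
    for url in urls:
        status = get_status(url)
        if status >= 400:
            problems[url] = status
    return problems


# Offline demonstration with invented results in place of real requests.
fake_statuses = {
    "https://example.com/pricing": 200,
    "https://example.com/blog/old-post": 404,
    "https://example.com/api-docs": 500,
}
print(find_error_pages(list(fake_statuses), get_status=fake_statuses.get))
```

In real use you would call `find_error_pages` with your own list of key URLs and leave `get_status` at its default.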

2. Publish Content That Demonstrates Real Expertise

Review your existing content library with the E-E-A-T framework in mind. For each major piece of content, ask: does this demonstrate that a real person with real experience wrote it?

Practical improvements include:

- Adding detailed author bios that explain the author's relevant experience. A B2B SaaS blog that adds a byline reading "Written by Sarah Chen, Head of Customer Success at [Company], with 8 years of experience in SaaS onboarding" immediately strengthens the E-E-A-T signal compared to "Written by the [Company] Team."

- Including original data, even if it is from your own customer base or internal research

- Adding case studies with specific, verifiable results

- Citing credible external sources and linking to them

- Including the date the content was last updated, especially for topics that change frequently

For content that is thin or generic, consider whether it is worth updating substantially or consolidating with other similar content. A single comprehensive, expert-level guide on a topic will outperform five thin articles on related subtopics.

3. Ensure Your Site Is Mobile-Friendly and Fast

Google uses mobile-first indexing, meaning it primarily uses the mobile version of your site for indexing and ranking. If your site is not mobile-friendly, you are at a disadvantage in both traditional search and AI features.

Google retired its standalone Mobile-Friendly Test tool in late 2023, so check mobile usability with Lighthouse in Chrome DevTools instead and address any issues it flags. Then check your Core Web Vitals in Search Console. The three metrics to focus on are:

- Largest Contentful Paint (LCP): How long it takes for the main content to load. Target 2.5 seconds or less.

- Interaction to Next Paint (INP): How responsive the page is to user interactions. Target 200 milliseconds or less.

- Cumulative Layout Shift (CLS): How much the page layout shifts during loading. Target 0.1 or less.

Poor page experience is a ranking input for AI features, just as it is for traditional search. Improving your Core Web Vitals benefits your entire search presence.
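If you export these numbers for several pages, comparing them against the targets is simple arithmetic. The sketch below hard-codes the three targets listed above; the metric key names and the sample measurements are invented for illustration, and a value exactly at the limit is treated as passing.

```python
# Targets from the list above: LCP in seconds, INP in milliseconds, CLS unitless.
THRESHOLDS = {"lcp_seconds": 2.5, "inp_ms": 200, "cls": 0.1}


def failing_metrics(measured: dict[str, float]) -> list[str]:
    """Return the names of the metrics that exceed their target, sorted by name."""
    return sorted(
        name for name, limit in THRESHOLDS.items() if measured[name] > limit
    )


# Made-up measurements for a single page.
pricing_page = {"lcp_seconds": 3.1, "inp_ms": 180, "cls": 0.25}
print(failing_metrics(pricing_page))
```

Here the page would pass on INP but miss the LCP and CLS targets, which tells you where to spend performance effort first.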

4. Review and Update Your Structured Data

If you have already implemented structured data, audit it for accuracy. Structured data that is outdated or incorrect can actually hurt your credibility with Google's systems. Common issues include:

- Product schema with outdated pricing

- FAQ schema with questions that no longer match the page content

- Article schema with incorrect publication dates

If you have not implemented structured data, prioritize the types most relevant to your content:

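- FAQ schema for pages that answer common questions (since 2023 Google shows FAQ rich results only for well-known government and health sites, so treat this as a way to describe your content rather than a way to earn a rich result)

- HowTo schema for step-by-step guides (Google retired HowTo rich results in 2023, so this is a lower priority)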

- Product schema for product or service pages

- Article schema for blog posts and editorial content

- Organization schema for your homepage and about page

Use Google's Rich Results Test to validate your structured data and confirm it is being read correctly.
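The Rich Results Test confirms that markup is valid, but not that it still matches the page. One of the issues listed above, FAQ schema whose questions no longer appear on the page, can be caught with a simple comparison. This is a rough sketch: the function name, the FAQ data, and the page text are all hypothetical, and it assumes you have already extracted the page's visible text by some other means.

```python
def stale_faq_questions(faq_jsonld: dict, page_text: str) -> list[str]:
    """Return FAQ questions from the markup that are missing from the page text."""

    def normalize(text: str) -> str:
        # Lowercase and collapse whitespace so formatting differences do not matter.
        return " ".join(text.lower().split())

    normalized_page = normalize(page_text)
    return [
        item.get("name", "")
        for item in faq_jsonld.get("mainEntity", [])
        if normalize(item.get("name", "")) not in normalized_page
    ]


# Hypothetical FAQPage markup with two questions.
faq_markup = {
    "@context": "https://schema.org",
    "@type": "FAQPage",
    "mainEntity": [
        {"@type": "Question", "name": "Do you offer a free trial?",
         "acceptedAnswer": {"@type": "Answer", "text": "Yes, for 14 days."}},
        {"@type": "Question", "name": "Is there a setup fee?",
         "acceptedAnswer": {"@type": "Answer", "text": "No."}},
    ],
}

# Hypothetical visible text of the page after a rewrite dropped one question.
page_text = "Frequently asked questions. Do you offer a free  trial? Yes, for 14 days."

print(stale_faq_questions(faq_markup, page_text))
```

Any question the function returns is a candidate for either restoring on the page or removing from the markup.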

5. Create Content That Answers Real Questions Completely

Look at the queries your site currently ranks for in Search Console. For each major topic area, ask: does your content fully answer the question, or does it leave gaps that would send a reader back to Google to search again?

Content that fully satisfies a query is more likely to be cited in an AI Overview than content that partially addresses it. This does not mean writing longer for the sake of length. It means being genuinely comprehensive about the specific question you are answering.

One practical approach: use the "People Also Ask" section in Google search results for your target queries. These are the follow-up questions real users have after their initial search. If your content addresses the main question but not the follow-up questions, you have an opportunity to expand it.

6. Build Your Site's Topical Authority

Google's AI features tend to cite sources that have demonstrated consistent expertise on a topic over time. A site that has published 50 high-quality articles on project management is more likely to be cited for project management queries than a site that has published one.

This does not mean you need to publish constantly. It means being strategic about building depth on the topics most important to your business. Identify the three to five topic areas where you want to be recognized as an authority, and build a content plan that creates genuine depth in those areas.

For more guidance on building a content strategy that aligns with these principles, explore TryReadable's guides or see how we work with brands to improve content quality at scale.

The Bottom Line

Google's guidance on AEO, GEO, and AI search is ultimately a message of continuity. The fundamentals of good SEO (crawlable pages, high-quality content, clear structure, genuine expertise, and fast performance) are the same fundamentals that drive visibility in AI features.

The industry has generated a significant amount of noise around AI search optimization, much of it designed to create urgency and sell new services. Google's official guide cuts through that noise with a clear message: stop overcomplicating it.

You do not need llms.txt files. You do not need AI-specific schema. You do not need to reformat your content into chunks. What you need is a site that Google can crawl, content that genuinely helps people, and a consistent commitment to publishing material that demonstrates real expertise.

The emerging area to watch is agentic AI, where Google's systems will increasingly take actions on behalf of users rather than just answering questions. Preparing for this means ensuring your site is fast, navigable, and contains clearly structured information. These are improvements worth making now, both for the AI future and for the human visitors you are serving today.

If you want to evaluate how your current content measures up against these standards, TryReadable's content analysis tool is a good starting point. And if you are working through a larger content strategy question, book a demo to talk through your specific situation.

The game has not changed as much as the industry wants you to believe. Play it well, and you will be in a strong position regardless of how AI search continues to evolve.

Sources

- Google's Guide to Optimizing for Generative AI Features on Google Search | Google Search Central | Documentation | Google for Developers

- Google's New AI Search Guide Calls AEO And GEO 'Still SEO'

- Google Search Essentials (formerly Webmaster Guidelines) | Google Search Central | Documentation | Google for Developers

- Creating Helpful, Reliable, People-First Content | Google Search Central | Documentation | Google for Developers

- Google Search's Guidance on Generative AI Content on Your Website | Google Search Central | Documentation | Google for Developers

- Google Search Technical Requirements | Google Search Central | Documentation | Google for Developers

- Crawl Budget Management | Google Crawling Infrastructure | Crawling infrastructure | Google for Developers

- JavaScript SEO Basics | Google Search Central | Documentation | Google for Developers

- Google Search Central documentation

- Google AI Overviews documentation

- OpenAI announcement archive

- Anthropic documentation

- Schema.org structured data vocabulary

- W3C JSON-LD specification

- Google Analytics developer docs

- NIST AI Risk Management Framework
