Over the past few months, we’ve been analyzing AI-agent traffic across websites that use Readable.

Across roughly 2 million AI-agent requests per month, we expected to see at least some requests for /llms.txt, a proposed standard for helping LLMs understand website content.

But after monitoring traffic across more than 100 websites:

We haven’t seen a single request for /llms.txt.

Not from ChatGPT.

Not from Claude.

Not from Perplexity.

Not from other AI agents.

This surprised us, because llms.txt has been getting a lot of attention in discussions about AI-readable websites.

So we decided to look deeper into the data.

What is llms.txt?

llms.txt is a proposed convention for helping LLMs understand how to consume a website.

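The idea is loosely modeled on robots.txt: a plain-text file served from the site root. But where robots.txt tells search engine crawlers which URLs they may fetch, llms.txt points LLMs to the content most worth reading.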

A typical llms.txt file might:

- describe the purpose of the website

- list important documentation or pages

- provide structured entry points for AI agents

- guide LLMs toward machine-readable content

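The proposal uses Markdown rather than key-value fields, so a minimal llms.txt for an API documentation site might look like this:

```markdown
# Example API

> Documentation for Example API

## Docs

- [Main docs](https://example.com/docs)
- [API reference](https://example.com/api)
```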

The hope is that AI agents would fetch /llms.txt and use it as a starting point for understanding the site.
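To make that idea concrete, here is a minimal sketch of what an agent-side consumer could do with the file once fetched. It assumes the Markdown layout described in the llms.txt proposal (an H1 title, an optional blockquote summary, and H2 sections containing `[title](url)` link lists). The names `LlmsTxt` and `parse_llms_txt` are hypothetical, and real files may not follow this layout exactly.

```python
import re
from dataclasses import dataclass, field


@dataclass
class LlmsTxt:
    title: str = ""
    summary: str = ""
    # Maps each H2 section name to the (link title, URL) pairs listed under it.
    sections: dict[str, list[tuple[str, str]]] = field(default_factory=dict)


# Matches list items such as "- [API reference](https://example.com/api): optional notes"
LINK_ITEM = re.compile(r"^-\s*\[([^\]]+)\]\(([^)\s]+)\)")


def parse_llms_txt(text: str) -> LlmsTxt:
    """Parse an llms.txt body, assuming the proposal's Markdown layout."""
    doc = LlmsTxt()
    current_section = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("# ") and not doc.title:
            doc.title = line[2:].strip()  # first H1 is the site or project name
        elif line.startswith("> ") and not doc.summary:
            doc.summary = line[2:].strip()  # first blockquote is the short summary
        elif line.startswith("## "):
            current_section = line[3:].strip()
            doc.sections.setdefault(current_section, [])
        elif current_section is not None:
            match = LINK_ITEM.match(line)
            if match:
                doc.sections[current_section].append((match.group(1), match.group(2)))
    return doc


example = """# Example API

> Documentation for Example API

## Docs

- [Main docs](https://example.com/docs)
- [API reference](https://example.com/api)
"""

parsed = parse_llms_txt(example)
print(parsed.sections["Docs"][1])  # ('API reference', 'https://example.com/api')
```

An agent following this pattern would then fetch the listed URLs instead of crawling the site blindly.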

However, real-world traffic tells a different story.

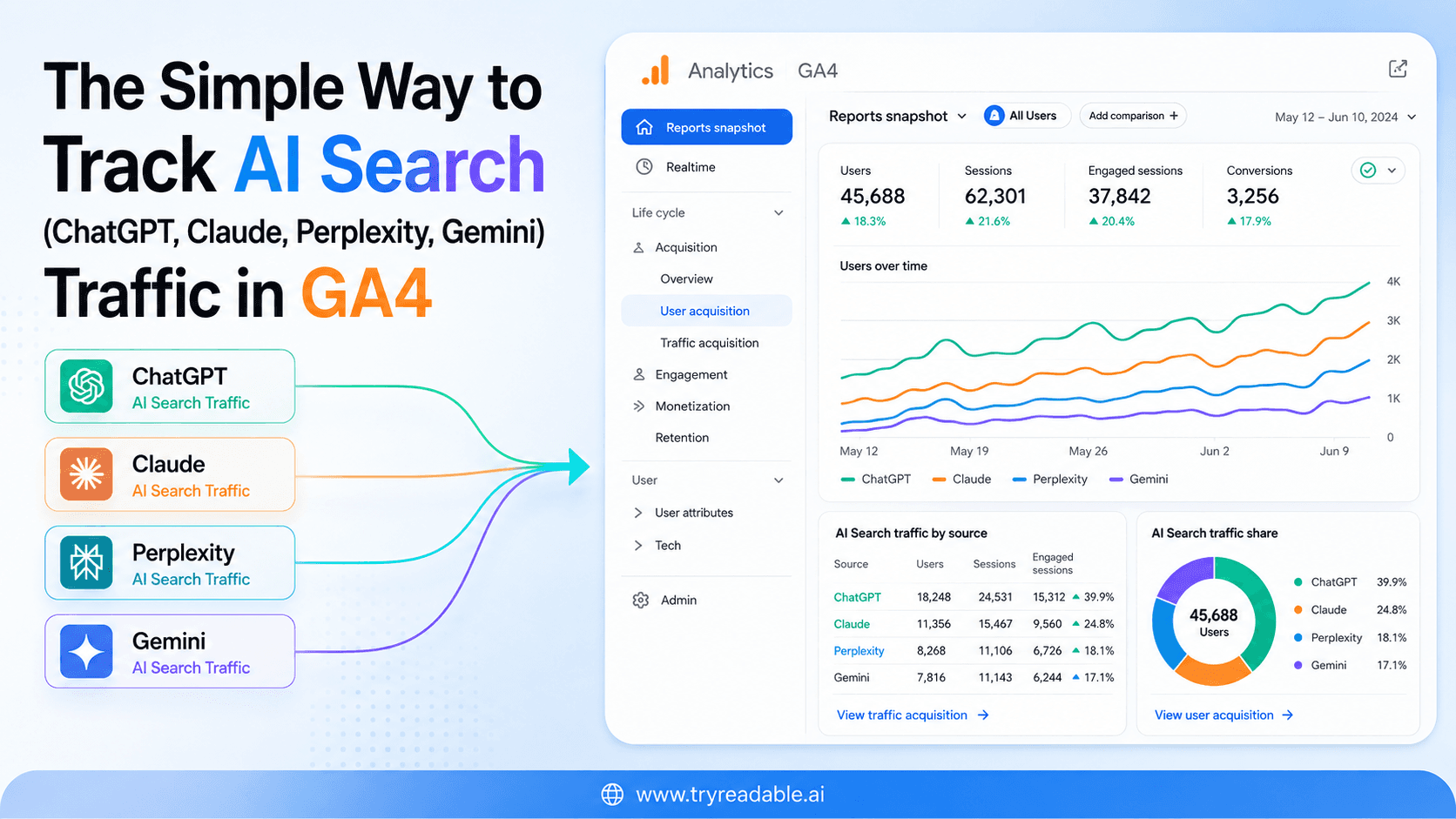

Our dataset

Our observations come from traffic data across Readable customers’ websites.

| Metric | Value |
| --- | --- |
| Websites monitored | 100+ |
| Monthly AI-agent requests | ~2 million |
| Time window | Multiple months |
| Agents observed | ChatGPT, Claude, Perplexity, and others |

The traffic includes requests generated by:

- LLM browsing tools

- AI search assistants

- automated agent workflows

- AI-powered developer tools

These agents fetch normal web pages while answering questions, researching topics, or retrieving documentation.

Why we’re confident in this data

One common question when analyzing traffic logs is: Are you actually seeing all requests?

In our case, yes.

Readable sits at the CDN edge, meaning every HTTP request passes through our infrastructure before reaching the website origin.

For each request, we capture:

- full request path

- user agent

- headers

- IP

- response metadata

This includes all types of traffic:

- humans

- search engines

- AI agents

- bots

- scrapers

Importantly, this capture happens at the edge, before a request reaches application servers or any other origin infrastructure.

So if an AI agent attempted to request:

/llms.txt

we would see it.

Across millions of AI-agent requests, we observed zero such requests.
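To illustrate what “we would see it” means in practice, here is a minimal sketch of such a check over edge logs. It is hypothetical and deliberately simplified, not Readable’s actual classifier: each request is reduced to a `(path, user_agent)` pair, AI agents are recognized by a few User-Agent tokens that OpenAI, Anthropic, and Perplexity publish for their crawlers and fetchers (an illustrative, non-exhaustive list), and the path is normalized so that `/llms.txt?v=2` or `/llms.txt/` still counts as a hit. Because User-Agent strings can be spoofed, a production classifier would typically also verify the vendors’ published IP ranges.

```python
from typing import Iterable, Optional
from urllib.parse import urlsplit

# Illustrative, non-exhaustive User-Agent tokens (an assumption, not Readable's list).
AI_AGENT_TOKENS = {
    "GPTBot": "OpenAI",
    "ChatGPT-User": "OpenAI",
    "OAI-SearchBot": "OpenAI",
    "ClaudeBot": "Anthropic",
    "Claude-User": "Anthropic",
    "PerplexityBot": "Perplexity",
    "Perplexity-User": "Perplexity",
}
SEARCH_ENGINE_TOKENS = ("Googlebot", "bingbot")


def classify_user_agent(user_agent: str) -> tuple[str, Optional[str]]:
    """Return (category, vendor) for a raw User-Agent header value."""
    ua = user_agent.lower()
    for token, vendor in AI_AGENT_TOKENS.items():
        if token.lower() in ua:
            return "ai_agent", vendor
    for token in SEARCH_ENGINE_TOKENS:
        if token.lower() in ua:
            return "search_engine", None
    # Humans, unlisted bots, and scrapers all land here in this simplified sketch.
    return "other", None


def normalize_path(raw_path: str) -> str:
    """Drop the query string and fragment, plus any trailing slash except on "/"."""
    path = urlsplit(raw_path).path
    return path.rstrip("/") or "/"


def count_llms_txt_hits(requests: Iterable[tuple[str, str]]) -> int:
    """Count AI-agent requests for /llms.txt; each request is a (path, user_agent) pair."""
    return sum(
        1
        for raw_path, user_agent in requests
        if normalize_path(raw_path) == "/llms.txt"
        and classify_user_agent(user_agent)[0] == "ai_agent"
    )
```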

What AI agents actually request

Instead of llms.txt, AI agents mostly behave like normal web clients.

The most common requests we see include:

- /

- /docs

- /pricing

- /blog

- /features

- /sitemap.xml

- /robots.txt

In other words, agents usually fetch normal website pages and parse them directly.

This aligns with how many AI systems currently retrieve information:

- search the web

- open relevant pages

- extract useful content

- summarize it

In that workflow, a special discovery file like llms.txt may not be necessary.
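A ranking like the list of most common requests above comes from a plain frequency count. Here is a minimal sketch, assuming each request has already been reduced to a hypothetical `(path, category)` pair. Query strings are dropped so that `/docs?page=2` and `/docs` count as one page.

```python
from collections import Counter
from urllib.parse import urlsplit


def top_paths(requests, category="ai_agent", n=10):
    """Return the n most requested paths for one traffic category, ignoring query strings."""
    counts = Counter(
        urlsplit(raw_path).path or "/"
        for raw_path, request_category in requests
        if request_category == category
    )
    return counts.most_common(n)


sample = [("/", "ai_agent"), ("/docs?page=2", "ai_agent"), ("/docs", "ai_agent"), ("/pricing", "other")]
print(top_paths(sample, n=2))  # [('/docs', 2), ('/', 1)]
```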

How LLMs currently explore websites

Based on the traffic patterns we observe, most AI agents follow a process similar to this:

Step 1: Find a page

Usually through search engines or internal knowledge.

Step 2: Fetch the page

Agents request the full page HTML.

Step 3: Extract text

They parse the page and extract readable content.

Step 4: Follow links

If needed, they fetch additional pages linked from the original page.

This means LLMs are currently relying more on page readability than on dedicated metadata files.
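The fetch, extract, and follow-links steps above can be approximated with nothing but the Python standard library. This is a minimal sketch, not how any particular agent is implemented. `PageReader`, `read_page`, and `fetch` are hypothetical names; the parser drops `<script>` and `<style>` contents, and relative links are resolved against the page URL so they can be fetched next. Note that it never executes JavaScript, so it only sees what the server sends.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin
from urllib.request import urlopen


class PageReader(HTMLParser):
    """Collects visible text and absolute link URLs from one HTML page."""

    SKIP = {"script", "style"}

    def __init__(self, base_url: str):
        super().__init__()
        self.base_url = base_url
        self.text_parts: list[str] = []
        self.links: list[str] = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                # Step 4: resolve relative links so they can be fetched later.
                self.links.append(urljoin(self.base_url, href))

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Step 3: keep only text that is not inside script/style blocks.
        if not self._skip_depth and data.strip():
            self.text_parts.append(data.strip())


def read_page(html: str, base_url: str) -> tuple[str, list[str]]:
    """Return (extracted text, outgoing links) for an HTML document."""
    reader = PageReader(base_url)
    reader.feed(html)
    reader.close()
    return " ".join(reader.text_parts), reader.links


def fetch(url: str) -> str:
    """Step 2: download the raw HTML (no JavaScript is executed)."""
    with urlopen(url, timeout=10) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")
```

Calling `read_page(fetch(url), url)` covers steps 2 to 4 for a single page; deciding which of the returned links are worth following is left to the agent.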

Why llms.txt might not be used (yet)

There are several possible explanations.

1. The standard is still experimental

llms.txt is a relatively new proposal and has not yet been widely adopted by AI companies.

Most LLM systems likely rely on existing web discovery mechanisms.

2. Many agents use search engines

A large portion of AI-agent traffic originates from systems that first use:

- Bing

- internal search indexes

In these cases, agents already know which page they want to fetch.

They don’t need a discovery file.

3. Agents simply parse the page

Modern LLMs are very good at extracting information from:

- HTML

- Markdown

- documentation pages

- structured headings

Because of this, they can often understand a site without additional guidance.

What actually helps LLMs understand websites

From observing AI-agent traffic and behavior, several patterns are becoming clear.

Websites that are easier for LLMs to understand tend to have:

- clean HTML structure

- clear headings

- documentation-style content

- minimal client-side JavaScript

- readable text content

Pages that rely heavily on:

- complex JavaScript rendering

- deeply nested UI components

- text embedded in images

are much harder for AI agents to interpret.

In practice, based on what we observe, content readability appears to matter far more than discovery files.
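The JavaScript problem is easy to check roughly: measure how much text the server-sent HTML carries before any script runs. The sketch below is a hypothetical heuristic for illustration, not a measurement taken from the traffic data above, and the 50-word threshold is an arbitrary assumption to tune per site. A client-rendered app shell, such as an empty `<div id="root"></div>` plus a script bundle, scores close to zero words.

```python
from html.parser import HTMLParser


class VisibleTextCounter(HTMLParser):
    """Counts words in server-rendered HTML, ignoring script and style bodies."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.words = 0
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.words += len(data.split())


def readable_without_js(html: str, min_words: int = 50) -> bool:
    """Hypothetical check: does the raw HTML carry enough text without running JavaScript?"""
    counter = VisibleTextCounter()
    counter.feed(html)
    counter.close()
    return counter.words >= min_words
```

Counting words is crude (navigation menus and footers inflate it), but it catches the worst case: a page whose content only exists after JavaScript runs.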

The bigger shift: AI-readable websites

The web has historically been designed for human browsers.

But AI agents are quickly becoming another major consumer of web content.

This raises an interesting question:

Instead of adding files like llms.txt, should websites focus on becoming more AI-readable by default?

Some emerging approaches include:

- markdown-based content

- simplified HTML

- structured documentation

- AI-optimized content representations

These approaches can improve how both humans and machines understand the web.

We’d love to hear what others are seeing

Our observations are based on millions of AI-agent requests, but the ecosystem is evolving quickly.

If you operate:

- large websites

- developer documentation platforms

- AI infrastructure

- crawler systems

We’d be curious to know:

Have you seen AI agents request /llms.txt in real traffic logs?

The idea behind llms.txt is interesting — but based on current traffic patterns, it does not yet appear to be used by major LLM systems.
